Scientific Theory Definition and Examples

A scientific theory is a well-established explanation of some aspect of the natural world. Theories come from scientific data and multiple experiments. While it is not possible to prove a theory, a single contrary result using the scientific method can disprove it. In other words, a theory is testable and falsifiable.

Examples of Scientific Theories

There are many scientific theories in different disciplines:

- Astronomy: theory of stellar nucleosynthesis, theory of stellar evolution

- Biology: cell theory, theory of evolution, germ theory, dual inheritance theory

- Chemistry: atomic theory, Brønsted-Lowry acid-base theory, kinetic molecular theory of gases, Lewis acid-base theory, molecular theory, valence bond theory

- Geology: climate change theory, plate tectonics theory

- Physics: Big Bang theory, perturbation theory, theory of relativity, quantum field theory

Criteria for a Theory

In order for an explanation of the natural world to be a theory, it meets certain criteria:

- A theory is falsifiable. At some point, a theory withstands testing and experimentation using the scientific method.

- A theory is supported by lots of independent evidence.

- A theory explains existing experimental results and predicts outcomes of new experiments at least as well as other theories.

Difference Between a Scientific Theory and Theory

Usually, a scientific theory is just called a theory. However, a theory in science means something different from the way most people use the word. For example, if frogs rain down from the sky, a person might observe the frogs and say, “I have a theory about why that happened.” While that theory might be an explanation, it is not based on multiple observations and experiments. It might not be testable and falsifiable. It’s not a scientific theory (although it could eventually become one).

Value of Disproven Theories

Even though some theories are incorrect, they often retain value.

For example, Arrhenius acid-base theory does not explain the behavior of chemicals lacking hydrogen that behave as acids. The Brønsted-Lowry and Lewis theories are more general, and the Lewis theory in particular accounts for this behavior. Yet, the Arrhenius theory predicts the behavior of most acids and is easier for people to understand.

Another example is the theory of Newtonian mechanics. The theory of relativity is much more inclusive than Newtonian mechanics, which breaks down in certain frames of reference or at speeds close to the speed of light. But Newtonian mechanics is much simpler to understand, and its equations apply to everyday behavior.
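To put rough numbers on the Newtonian example, here is a minimal Python sketch. It relies on the standard result from special relativity that relativistic momentum equals the Newtonian value (mass times velocity) multiplied by the Lorentz factor, gamma = 1 / sqrt(1 - v²/c²). The function name `lorentz_factor` and the two sample speeds are illustrative choices, not something taken from the article.

```python
import math

# Speed of light in metres per second (exact by definition of the metre).
C = 299_792_458.0


def lorentz_factor(speed: float) -> float:
    """Return gamma = 1 / sqrt(1 - v^2 / c^2) for a speed in m/s below c."""
    if not 0.0 <= speed < C:
        raise ValueError("speed must satisfy 0 <= speed < c")
    beta = speed / C
    return 1.0 / math.sqrt(1.0 - beta * beta)


# Illustrative speeds: a car on a highway and an object moving at 90% of c.
highway_speed = 30.0
fast_speed = 0.9 * C

# gamma is the ratio of relativistic momentum to Newtonian momentum,
# so (gamma - 1) is the fractional error made by the Newtonian formula.
for label, speed in [("highway car", highway_speed), ("0.9 c", fast_speed)]:
    gamma = lorentz_factor(speed)
    print(f"{label}: gamma = {gamma:.6f}, Newtonian error = {gamma - 1.0:.3e}")
```

At highway speed the correction is far too small to measure in everyday life, which is why the simpler Newtonian equations are still used. At 90% of the speed of light, gamma is about 2.29, so the Newtonian momentum is too small by more than half.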

Difference Between a Scientific Theory and a Scientific Law

The scientific method leads to the formulation of both scientific theories and laws. Both theories and laws are falsifiable. Both theories and laws help with making predictions about the natural world. However, there is a key difference.

A theory explains why or how something works, while a law describes what happens without explaining it. Often, you see laws written in the form of equations or formulas.

Theories and laws are related, but theories never become laws or vice versa.

Theory vs Hypothesis

A hypothesis is a proposition that is tested via an experiment. A theory results from many, many tested hypotheses.

Theory vs Fact

Theories depend on facts, but the two words mean different things. A fact is an irrefutable piece of evidence or data. Facts never change. A theory, on the other hand, may be modified or disproven.

Difference Between a Theory and a Model

Both theories and models allow a scientist to form a hypothesis and make predictions about future outcomes. However, a theory both describes and explains, while a model only describes. For example, a model of the solar system shows the arrangement of planets and asteroids in a plane around the Sun, but it does not explain how or why they got into their positions.



What Do We Mean by “Theory” in Science?

A theory is a carefully thought-out explanation for observations of the natural world that has been constructed using the scientific method, and which brings together many facts and hypotheses.

In a previous blog post, I talked about the definition of “fact” in a scientific context, and discussed how facts differ from hypotheses and theories. The latter two terms also are well worth looking at in more detail because they are used differently by scientists and the general public, which can cause confusion when scientists talk about their work.

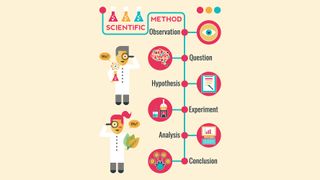

With these definitions in mind, a simplified version of the scientific process would be as follows. A scientist makes an observation of a natural phenomenon. She then devises a hypothesis about the explanation of the phenomenon, and she designs an experiment and/or collects additional data to test the hypothesis. If the test falsifies the hypothesis (i.e., shows that it is incorrect), she will have to develop a new hypothesis and test that. If the hypothesis is corroborated (i.e., not falsified) by the test, the scientist will retain it. If it survives additional scrutiny, she may eventually try to incorporate it into a larger theory that helps to explain her observed phenomenon and relate it to other phenomena.

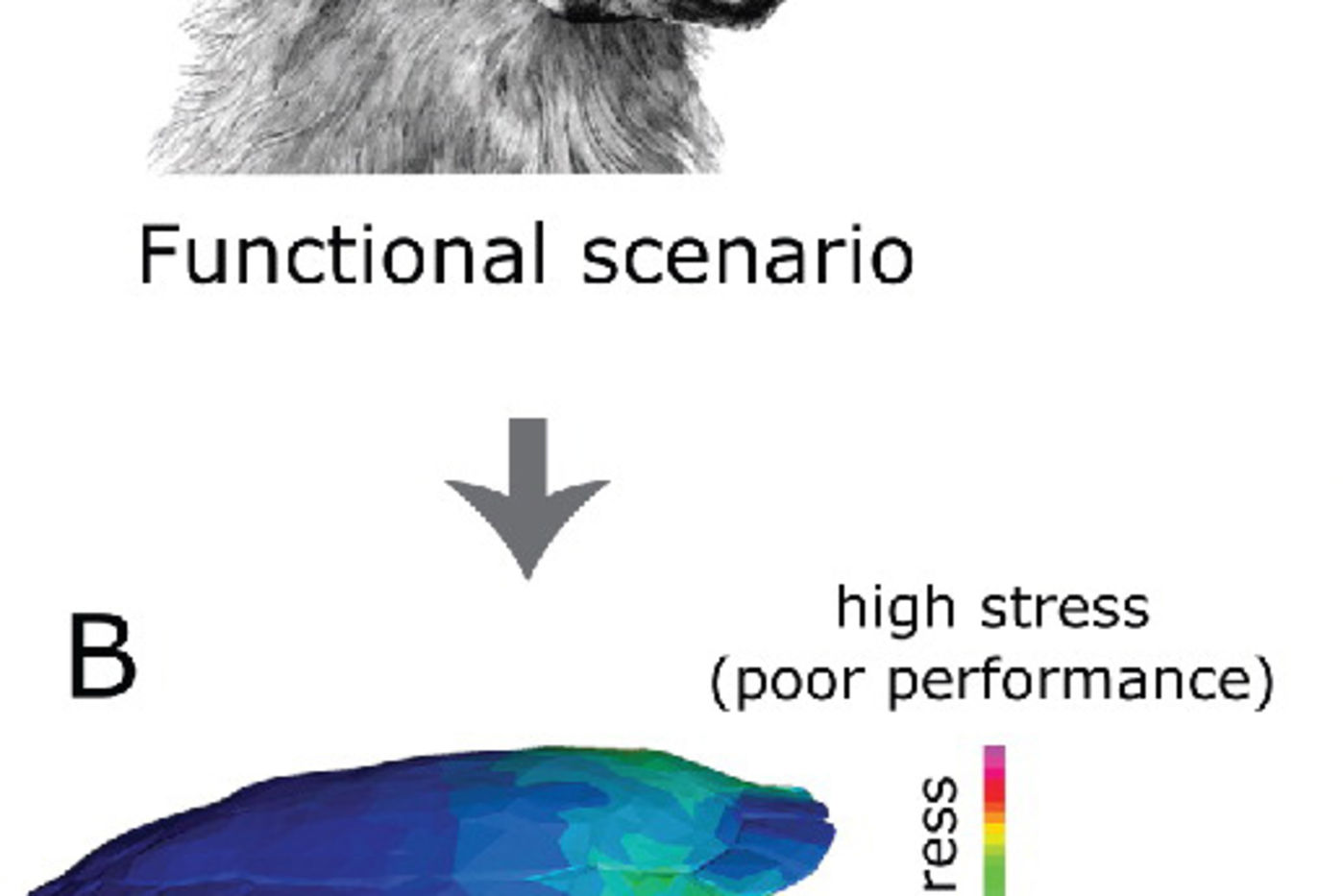

That's all fairly abstract, so let's look at a concrete example involving some recent research I undertook with a group of collaborators. The theory of evolution states that the process of natural selection should work to optimize the function of an organism's parts if the changes increase the chances of the organism successfully producing offspring and the changes are heritable (i.e., can be passed down from generation to generation).

But what happens when there are multiple selective pressures at work? We might hypothesize that turtles that spend most of their time in water face a trade-off between having a strong shell and one that is streamlined (making them more efficient swimmers), whereas streamlining would be less important to turtles on land, allowing them to evolve stronger shells even if they aren’t very streamlined.

Our results corroborated our hypothesis that aquatic turtles are forced to make more of a trade-off between strength and streamlining than turtles that live on land. In general, the shell shapes of our aquatic turtles were more streamlined but weaker than those of our land turtles, and our mathematical model of natural selection indicated that selection for streamlining was acting more strongly on the aquatic species.

As with any idea in science, our results are open to further testing. For example, other researchers might develop a better model of natural selection that shows that our model was overly simplistic. Or they might collect data from more turtle species that shows that our results were based on a false pattern stemming from sampling too few species (we considered 47 species in our dataset, about 14% of living turtle species). For now, though, our results can be added as a piece of evidence that is consistent with the predictions of the large explanatory theory of evolution.

If you would like to learn more about this research, the scientific paper describing the work can be found in the Journal of Vertebrate Paleontology. You can see some of the turtle specimens that we used in this research in The Field Museum's exhibition Specimens: Unlocking the Secrets of Life, open through January 7, 2018.

I am a paleobiologist interested in three main topics: 1) understanding the broad implications of the paleobiology and paleoecology of extinct terrestrial vertebrates, particularly in relation to large scale problems such as the evolution of herbivory and the nature of the end-Permian mass extinction; 2) using quantitative methods to document and interpret morphological evolution in fossil and extant vertebrates; and 3) trophic network-based approaches to paleoecology. To address these problems, I integrate data from a variety of biological and geological disciplines including biostratigraphy, anatomy, phylogenetic systematics and comparative methods, functional morphology, geometric morphometrics, and paleoecology.

A list of my publications can be found here.

More information on some of my research projects and other topics can be found on the fossil non-mammalian synapsid page.

Most of my research in vertebrate paleobiology focuses on anomodont therapsids, an extinct clade of non-mammalian synapsids ("mammal-like reptiles") that was one of the most diverse and successful groups of Permian and Triassic herbivores. Much of my dissertation research concentrated on reconstructing a detailed morphology-based phylogeny for Permian members of the clade, as well as using this as a framework for studying anomodont biogeography, the evolution of the group's distinctive feeding system, and anomodont-based biostratigraphic schemes. My more recent research on the group includes: species-level taxonomy of taxa such as Dicynodon, Dicynodontoides, Diictodon, Oudenodon, and Tropidostoma; development of a higher-level taxonomy for anomodonts; testing whether anomodonts show morphological changes consistent with the hypothesis that end-Permian terrestrial vertebrate extinctions were caused by a rapid decline in atmospheric oxygen levels; descriptions of new or poorly-known anomodonts from Antarctica, Tanzania, and South Africa; and examination of the implications of high growth rates in anomodonts. Fieldwork is an important part of my paleontological research, and recent field areas include the Parnaíba Basin of Brazil, the Karoo Basin of South Africa, the Ruhuhu Basin of Tanzania, and the Luangwa Basin of Zambia. My collaborators and I have made important discoveries in the course of these field projects, including the first remains of dinocephalian synapsids from Tanzania and a dinosaur relative that implies that the two main lineages of archosaurs (one including crocodiles and their relatives and the other including birds and dinosaurs) were diversifying in the early Middle Triassic, only a few million years after the end-Permian extinction.
Finally, the experience I have gained while studying Permian and Triassic terrestrial vertebrates forms the foundation for work I am now involved in using models of food webs to investigate how different kinds of biotic and abiotic perturbations could have caused extinctions in ancient communities.

Geometric morphometrics is the basis of most of my quantitative research on evolutionary morphology, and I have been using this technique to address several biological and paleontological questions. For example, I conducted a simulation-based study of how tectonic deformation influences our ability to extract biologically-relevant shape information from fossil specimens, and the effectiveness of different retrodeformation techniques. I also used the method to address taxonomic questions in biostratigraphically-important anomodont taxa, and I served as a co-advisor for a Ph.D. student at the University of Bristol who used geometric morphometrics and finite element analysis to examine the functional significance of skull shape variation in fossil and extant crocodiles. Focusing on more biological questions, I am currently working on a large geometric morphometric study of plastron shape in extant emydine turtles. To date, I have compiled a data set of over 1600 specimens belonging to nine species, and I am using these data to address causes of variation at both the intra- and interspecific level. Some of the main goals of the work are to examine whether plastron morphology reflects a phylogeographic signal identified using molecular data in Emys marmorata, whether the "miniaturized" turtles Glyptemys muhlenbergii and Clemmys guttata have ontogenies that differ from those of their larger relatives, and how habitat preference, phylogeny, and shell kinesis affect shell morphology.

A collaborative project that began during my time as a postdoctoral researcher at the California Academy of Sciences involves using models of trophic networks to examine how disturbances can spread through communities and cause extinctions. Our model is based on ecological principles, and some of the main data that we are using are a series of Permian and Triassic communities from the Karoo Basin of South Africa. Our research has already shown that the latest Permian Karoo community was susceptible to collapse brought on by primary producer disruption, and that the earliest Triassic Karoo community was very unstable. Presently we are investigating the mechanics that underlie this instability, and we're planning to investigate how the perturbation resistance of communities has changed over time. We've also experimented with ways to use the model to estimate the magnitude and type of disruptions needed to cause observed extinction levels during the end-Permian extinction event in the Karoo. Then there's the research project I've been working on almost my whole life.

Morphology and the stratigraphic occurrences of fossil organisms provide distinct, but complementary information about evolutionary history. Therefore, it is important to consider both sources of information when reconstructing the phylogenetic relationships of organisms with a fossil record, and I am interested in how these data sources can be used together in this process. In my empirical work on anomodont phylogeny, I have consistently examined the fit of my morphology-based phylogenetic hypotheses to the fossil record because simulation studies suggest that phylogenies which fit the record well are more likely to be correct. More theoretically, I developed a character-based approach to measuring the fit of phylogenies to the fossil record. I also have shown that measurements of the fit of phylogenetic hypotheses to the fossil record can provide insight into when the direct inclusion of stratigraphic data in the tree reconstruction process results in more accurate hypotheses. Most recently, I co-advised two master's students at the University of Bristol who examined how our ability to accurately reconstruct a clade's phylogeny changes over the course of the clade's history.


The Difference Between Hypothesis and Theory

A hypothesis is an assumption, an idea that is proposed for the sake of argument so that it can be tested to see if it might be true.

In the scientific method, the hypothesis is constructed before any applicable research has been done, apart from a basic background review. You ask a question, read up on what has been studied before, and then form a hypothesis.

A hypothesis is usually tentative; it's an assumption or suggestion made strictly for the objective of being tested.

A theory, in contrast, is a principle that has been formed as an attempt to explain things that have already been substantiated by data. It is used in the names of a number of principles accepted in the scientific community, such as the Big Bang Theory. Because of the rigors of experimentation and control, it is understood to be more likely to be true than a hypothesis is.

In non-scientific use, however, hypothesis and theory are often used interchangeably to mean simply an idea, speculation, or hunch, with theory being the more common choice.

Since this casual use does away with the distinctions upheld by the scientific community, hypothesis and theory are prone to being wrongly interpreted even when they are encountered in scientific contexts—or at least, contexts that allude to scientific study without making the critical distinction that scientists employ when weighing hypotheses and theories.

The most common occurrence is when theory is interpreted—and sometimes even gleefully seized upon—to mean something having less truth value than other scientific principles. (The word law applies to principles so firmly established that they are almost never questioned, such as the law of gravity.)

This mistake is one of projection: since we use theory in general to mean something lightly speculated, then it's implied that scientists must be talking about the same level of uncertainty when they use theory to refer to their well-tested and reasoned principles.

The distinction has come to the forefront particularly on occasions when the content of science curricula in schools has been challenged—notably, when a school board in Georgia put stickers on textbooks stating that evolution was "a theory, not a fact, regarding the origin of living things." As Kenneth R. Miller, a cell biologist at Brown University, has said, a theory “doesn’t mean a hunch or a guess. A theory is a system of explanations that ties together a whole bunch of facts. It not only explains those facts, but predicts what you ought to find from other observations and experiments.”

While theories are never completely infallible, they form the basis of scientific reasoning because, as Miller said, "to the best of our ability, we’ve tested them, and they’ve held up."

Two Related, Yet Distinct, Meanings of Theory

There are many shades of meaning to the word theory. Most of these are used without difficulty, and we understand, based on the context in which they are found, what the intended meaning is. For instance, when we speak of music theory we understand it to be in reference to the underlying principles of the composition of music, and not in reference to some speculation about those principles.

However, there are two senses of theory which are sometimes troublesome. These are the senses which are defined as “a plausible or scientifically acceptable general principle or body of principles offered to explain phenomena” and “an unproven assumption; conjecture.” The second of these is occasionally misapplied in cases where the former is meant, as when a particular scientific theory is derided as "just a theory," implying that it is no more than speculation or conjecture. One may certainly disagree with scientists regarding their theories, but it is an inaccurate interpretation of language to regard their use of the word as implying a tentative hypothesis; the scientific use of theory is quite different than the speculative use of the word.

- proposition

- supposition

hypothesis, theory, law mean a formula derived by inference from scientific data that explains a principle operating in nature.

hypothesis implies insufficient evidence to provide more than a tentative explanation.

theory implies a greater range of evidence and greater likelihood of truth.

law implies a statement of order and relation in nature that has been found to be invariable under the same conditions.


Word History

Late Latin theoria, from Greek theōria, from theōrein

1592, in the meaning defined at sense 6

Phrases Containing theory

- atomic theory

- auteur theory

- big bang theory

- Bohr theory

- catastrophe theory

- cell theory

- chaos theory

- conspiracy theory

- critical race theory

- decision theory

- devil theory

- domino theory

- field theory

- Galois theory

- game theory

- gauge theory

- general theory of relativity

- germ theory

- grand unified theory

- graph theory

- group theory

- information theory

- kinetic theory

- knot theory

- number theory

- quantity theory

- quantum field theory

- quantum theory

- queer theory

- special theory of relativity

- steady state theory

- string theory

- theory of games

- theory of numbers

- trickle-down theory

- undulatory theory

- wave theory


Cite this Entry

“Theory.” Merriam-Webster.com Dictionary, Merriam-Webster, https://www.merriam-webster.com/dictionary/theory. Accessed 17 May 2024.

Kids Definition


from Latin theoria "a looking at or considering of facts, theory," from Greek theōria "theory, action of viewing, consideration," from theōrein "to look at, consider," — related to theater



- Exercise type: Discussion

- Topic: Earth

- Category: Research & Design

How a scientific theory is born


Directions for teachers:

Use the online Science News article “How the Earth-shaking theory of plate tectonics was born,” and the prompts below to have students explore scientific theories and determine the process behind creating theories. A version of the story, “Shaking up Earth,” appears in the January 16, 2021 issue of Science News. As a final exercise, have students discuss the definition of a scientific theory and compare it with hypotheses and scientific laws.

This story is the first installment in a series that celebrates Science News’ upcoming 100th anniversary by highlighting some of the biggest advancements in science over the last century. For more on the story of plate tectonics, and to see the rest of the series as it appears, visit Science News’ Century of Science site at www.sciencenews.org/century.

Want to make it a virtual lesson? Post the online Science News article “How the Earth-shaking theory of plate tectonics was born,” to your learning management system. Pair up students and allow them to connect via virtual breakout rooms in a video conference, over the phone, in a shared document or using another chat system. Have each pair submit its answers to the second set of questions to you.

Thinking about theories

Discuss the following questions with a partner before reading the Science News article.

1. What does it mean to say that you have a theory about something? Think of a theory you’ve had about something outside of science.

Typically, when people say that they have a theory, it means that they have an idea or philosophy. Student examples of theories will vary.

2. What is one scientific theory you have learned about this year in science? Explain what you remember about it.

Student answers will vary, but may include the general theory of relativity, evolution, etc.

3. How does the general use of the term theory differ from its use in a scientific context?

Theories in science are explanations rooted in data. Having a theory outside of the scientific context may be based on observations or data, or the term may be used to state a logical idea.

The theory of plate tectonics

Read the online Science News article “How plate tectonics upended our understanding of Earth,” and answer the following questions individually before discussing them as a class.

1. What is the theory of plate tectonics? Over how many years was it developed?

The theory of plate tectonics states that the Earth’s surface is broken up into various pieces (plates) and describes how and why they are constantly in motion and how that motion is linked to features seen on Earth. The theory was developed over about 50 years.

2. Who helped develop the theory and what did they contribute to it? What types of scientists were they and where were they from?

Meteorologist Alfred Wegener proposed the idea of continental drift in 1912, and geologist Arthur Holmes added to that proposal years later with an explanation for how the continents might drift. These ideas were the precursors to the development of the theory of plate tectonics. From there, seismologists, geophysicists, mathematicians and physicists established the ideas, such as seafloor spreading, and found the data necessary to develop the theory. Notable scientists include Lynn Sykes, Harry Hess, Robert S. Dietz, Robert Parker, W. Jason Morgan and Dan McKenzie. The researchers were from England and the United States.

3. Before the theory’s development, what were the conflicting lines of thought?

Wegener’s proposal sparked debates between mobilists, who supported the idea that the Earth’s surface was in motion, and fixists, who thought the Earth’s surface was static.

4. What did scientists need to resolve the conflict? Why did the conflict take so long to resolve?

In order to resolve the debate, scientists needed evidence. Wegener made his proposal in the early 1900s, but scientific evidence for why and how the continents move didn’t become available until after World War II, when technological advancements allowed scientists to study Earth’s surface and interior, and particularly the bottom of the oceans, in unprecedented detail.

5. How was evidence communicated to other members of the scientific community? Why was the communication important?

Evidence was communicated at conferences attended by scientists including geologists and geophysicists. By building on each other’s ideas and using each other’s data, the scientists were able to go beyond the idea of continental drift and come up with the unified theory of plate tectonics.

Defining a scientific theory

Discuss the following questions with a classmate.

1. Based on your answers to the questions above, how would you define a scientific theory?

A scientific theory is an explanation for how and why a natural phenomenon occurs based on evidence.

2. Think about a scientific hypothesis that you have written or look up an example of a hypothesis. How would you define a hypothesis? How is it different than a theory?

A hypothesis is a proposed explanation for a scientific question that hasn’t been validated with evidence. A theory relies on evidence to explain phenomena, whereas a hypothesis is proposed before the gathering of evidence. A hypothesis that survives repeated testing can be incorporated into a theory, while one that is disproven must be revised or replaced.

Possible Extension

What is a scientific law that you have learned about in school? Explain how a scientific law is different than a scientific theory. For more information, watch this TED-Ed video called “What’s the difference between a scientific law and a theory?” by educator Matt Anticole.

Student answers will vary, but could include Newton’s three laws of motion, Bernoulli’s principle, etc. A scientific law is different than a scientific theory in that it describes and predicts the relationships among variables, whereas a scientific theory describes how or why something happens.

Theory Definition in Science


The definition of a theory in science is very different from the everyday usage of the word. In fact, it's usually called a "scientific theory" to clarify the distinction. In the context of science, a theory is a well-established explanation for scientific data. Theories typically cannot be proven, but they can become established if they are tested by several different scientific investigators. A theory can be disproven by a single contrary result.

Key Takeaways: Scientific Theory

- In science, a theory is an explanation of the natural world that has been repeatedly tested and verified using the scientific method.

- In common usage, the word "theory" means something very different. It could refer to a speculative guess.

- Scientific theories are testable and falsifiable. That is, it's possible a theory might be disproven.

- Examples of theories include the theory of relativity and the theory of evolution.

There are many different examples of scientific theories in different disciplines. Examples include:

- Physics: the big bang theory, atomic theory, theory of relativity, quantum field theory

- Biology: the theory of evolution, cell theory, dual inheritance theory

- Chemistry: the kinetic theory of gases, valence bond theory, Lewis theory, molecular orbital theory

- Geology: plate tectonics theory

- Climatology: climate change theory

Key Criteria for a Theory

There are certain criteria which must be fulfilled for a description to be a theory. A theory is not simply any description that can be used to make predictions!

A theory must do all of the following:

- It must be well-supported by many independent pieces of evidence.

- It must be falsifiable. In other words, it must be possible to test a theory at some point.

- It must be consistent with existing experimental results and able to predict outcomes at least as accurately as any existing theories.

Some theories may be adapted or changed over time to better explain and predict behavior. A good theory can be used to predict natural events that have not occurred yet or have yet to be observed.

Value of Disproven Theories

Over time, some theories have been shown to be incorrect. However, not all discarded theories are useless.

For example, we now know Newtonian mechanics is incorrect under conditions approaching the speed of light and in certain frames of reference. The theory of relativity was proposed to better explain mechanics. Yet, at ordinary speeds, Newtonian mechanics accurately explains and predicts real-world behavior. Its equations are much easier to work with, so Newtonian mechanics remains in use for general physics.
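To put rough numbers on this, the sketch below compares the Newtonian kinetic energy, (1/2)mv^2, with the relativistic value, (gamma - 1)mc^2, where gamma = 1/sqrt(1 - v^2/c^2). The mass cancels in the ratio, so only the speed matters. The function name and the two sample speeds are illustrative assumptions, not part of the original discussion.

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact by SI definition)


def newtonian_to_relativistic_ke_ratio(speed_m_per_s: float) -> float:
    """Ratio of Newtonian to relativistic kinetic energy at a given speed.

    With s = sqrt(1 - v^2/c^2), gamma - 1 equals (v^2/c^2) / (s * (1 + s)),
    so the ratio (1/2 v^2/c^2) / (gamma - 1) simplifies to s * (1 + s) / 2.
    This form avoids subtracting two nearly equal numbers at low speeds.
    """
    beta_squared = (speed_m_per_s / C) ** 2
    s = math.sqrt(1.0 - beta_squared)
    return s * (1.0 + s) / 2.0


# Illustrative speeds: roughly a jet airliner, and 90% of the speed of light.
for label, speed in [("300 m/s", 300.0), ("0.9 c", 0.9 * C)]:
    ratio = newtonian_to_relativistic_ke_ratio(speed)
    print(f"{label}: Newtonian / relativistic kinetic energy = {ratio:.15f}")
```

At the first speed the two formulas agree to within about one part in a trillion, which is why Newtonian mechanics remains adequate for everyday problems. At 90% of the speed of light the Newtonian value is less than a third of the relativistic one.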

In chemistry, there are many different theories of acids and bases. They involve different explanations for how acids and bases work (e.g., hydrogen ion release in water, proton transfer, electron pair transfer). Some theories, which are known to be incorrect under certain conditions, remain useful in predicting chemical behavior and making calculations.

Theory vs. Law

Both scientific theories and scientific laws are the result of testing hypotheses via the scientific method. Both theories and laws may be used to make predictions about natural behavior. However, theories explain why something works, while laws simply describe behavior under given conditions. Theories do not change into laws; laws do not change into theories. Both laws and theories may be falsified by contrary evidence.

Theory vs. Hypothesis

A hypothesis is a proposition which requires testing. Theories are the result of many tested hypotheses.

Theory vs. Fact

While theories are well-supported and may be true, they are not the same as facts. Facts are irrefutable, while a contrary result may disprove a theory.

Theory vs. Model

Models and theories share common elements, but a theory both describes and explains while a model simply describes. Both models and theories may be used to make predictions and develop hypotheses.



Theory n., plural: theories [ˈθɪɚ.i] Definition: a scientific explanation of a phenomenon based on a concurring set of scientific data from various independent studies


Theory Definition

In science, a theory is a scientific explanation of a phenomenon. Here, scientific means that the explanation or expectation is based on a body of facts that have been repeatedly confirmed through methodical observations and experiments. For instance, in mathematics, a mathematical theory attempts to describe a particular class of constructs and includes axioms, theorems, examples, etc. In biology, a theory is a widely accepted explanation of a biological phenomenon based on sound evidence from rigorous empirical experiments and scientific observations. An example of a popular biological theory is Charles Darwin’s Theory of Evolution by Natural Selection. This theory attempts to explain evolution, with natural selection as one of the vital mechanisms that drive organisms to change progressively over successive generations.

Etymology: from Latin theōria, Greek theōría, meaning “a viewing” or “contemplating”. Compare: hypothesis


Theory vs. Hypothesis

The term theory is generally used to imply speculation or assumption that has not been fully verified or has relatively limited proof. However, in science, an unproven idea or mere theoretical speculation is regarded as a hypothesis rather than a scientific theory.

A scientific hypothesis is a tentative explanation for a phenomenon that is yet to be tested through scientific, methodical experiments. If it is confirmed by repeated investigations and supported by various independent studies, the hypothesis will likely be widely accepted by the scientific community and, over time, provided no scientific evidence debunks it, becomes a theory.

Theory vs. Law

Both scientific theories and laws are based on facts and are accepted by the scientific community as the truth. And both are used to make predictions of future events. However, while a scientific theory tells us why and how a phenomenon happens, a scientific law will tell us what to expect in a particular situation, especially through a mathematical equation (MasterClass, 2020). Therefore, scientific theories and laws are essential in understanding things, particularly to grasp why they happen as they do and to reliably and factually predict results in a given situation or condition.

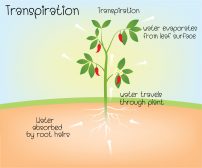

In biology, Mendel’s Laws can be used as a basis for predicting the genotypes and phenotypes of offspring when a trait conforms to Mendelian inheritance. Gregor Mendel formulated the laws of heredity based on the patterns of trait variation he noticed when he conducted a series of breeding experiments on garden pea plants. Initially, his principles were not widely accepted; that changed only after his works were rediscovered and reverified. Now, his principles of heredity are regarded as laws.
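As a small illustration of how such a law yields predictions, the Python sketch below builds a Punnett square for a single-gene cross under simple Mendelian assumptions: one gene, two alleles, complete dominance, with an uppercase letter marking the dominant allele. The function names and the "Aa" notation are illustrative conventions, not taken from the text above.

```python
from collections import Counter
from itertools import product


def punnett_square(parent1: str, parent2: str) -> Counter:
    """Count offspring genotypes for a single-gene cross, e.g. 'Aa' x 'Aa'."""
    offspring = Counter()
    # Each parent passes on one of its two alleles with equal probability.
    for allele1, allele2 in product(parent1, parent2):
        # Sorting puts the uppercase (dominant) allele first, so 'aA' becomes 'Aa'.
        genotype = "".join(sorted(allele1 + allele2))
        offspring[genotype] += 1
    return offspring


def phenotype_counts(genotypes: Counter) -> tuple:
    """Return (dominant, recessive) counts, assuming complete dominance."""
    dominant = sum(
        count for genotype, count in genotypes.items()
        if any(allele.isupper() for allele in genotype)
    )
    recessive = sum(genotypes.values()) - dominant
    return dominant, recessive


cross = punnett_square("Aa", "Aa")
print(dict(cross))
print(phenotype_counts(cross))
```

For two heterozygous parents the sketch gives the 1:2:1 genotype ratio and the 3:1 phenotype ratio. Traits that are codominant or incompletely dominant would break the complete-dominance assumption in `phenotype_counts`.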

Although scientific theories and laws are accepted as scientific facts, they can be disproven when new evidence surfaces.

Mendel’s Laws, for instance, do not apply to some conditions, such as in the case of non-Mendelian inheritance. Codominance, incomplete dominance, and extranuclear inheritance are just some of the many examples of biological inheritance where Mendel’s Laws on heredity do not apply.

- MasterClass. (2020). Theory vs. Law: Basics of the Scientific Method. MasterClass. https://www.masterclass.com/articles/theory-vs-law-basics-of-the-scientific-method#4-examples-of-scientific-theories

Last updated on July 24th, 2022


Science and the scientific method: Definitions and examples

Here's a look at the foundation of doing science — the scientific method.


Science is a systematic and logical approach to discovering how things in the universe work. It is also the body of knowledge accumulated through the discoveries about all the things in the universe.

The word "science" is derived from the Latin word "scientia," which means knowledge based on demonstrable and reproducible data, according to the Merriam-Webster dictionary. True to this definition, science aims for measurable results through testing and analysis, a process known as the scientific method. Science is based on fact, not opinion or preferences. The process of science is designed to challenge ideas through research. One important aspect of the scientific process is that it focuses only on the natural world, according to the University of California, Berkeley. Anything that is considered supernatural, or beyond physical reality, does not fit into the definition of science.

When conducting research, scientists use the scientific method to collect measurable, empirical evidence in an experiment related to a hypothesis (often in the form of an if/then statement) that is designed to support or contradict a scientific theory.

"As a field biologist, my favorite part of the scientific method is being in the field collecting the data," Jaime Tanner, a professor of biology at Marlboro College, told Live Science. "But what really makes that fun is knowing that you are trying to answer an interesting question. So the first step in identifying questions and generating possible answers (hypotheses) is also very important and is a creative process. Then once you collect the data you analyze it to see if your hypothesis is supported or not."

The steps of the scientific method go something like this, according to Highline College:

- Make an observation or observations.

- Form a hypothesis — a tentative description of what's been observed, and make predictions based on that hypothesis.

- Test the hypothesis and predictions in an experiment that can be reproduced.

- Analyze the data and draw conclusions; accept or reject the hypothesis or modify the hypothesis if necessary.

- Reproduce the experiment until there are no discrepancies between observations and theory. "Replication of methods and results is my favorite step in the scientific method," Moshe Pritsker, a former post-doctoral researcher at Harvard Medical School and CEO of JoVE, told Live Science. "The reproducibility of published experiments is the foundation of science. No reproducibility — no science."

Some key underpinnings to the scientific method:

- The hypothesis must be testable and falsifiable, according to North Carolina State University. Falsifiable means that there must be a possible negative answer to the hypothesis.

- Research must involve deductive reasoning and inductive reasoning. Deductive reasoning is the process of using true premises to reach a logical true conclusion while inductive reasoning uses observations to infer an explanation for those observations.

- An experiment should include an independent variable (which the experimenter deliberately changes) and a dependent variable (which is measured and responds to that change), according to the University of California, Santa Barbara.

- An experiment should include an experimental group and a control group. The control group is what the experimental group is compared against, according to Britannica.
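The roles of the variables and groups can be made concrete with a short Python sketch. It assumes a hypothetical plant-growth experiment: the amount of fertilizer is the independent variable set by the experimenter, plant height is the dependent variable that gets measured, and the control group receives no fertilizer. All numbers are invented for illustration.

```python
from statistics import mean

# Independent variable: fertilizer dose in grams, chosen by the experimenter.
CONTROL_DOSE_G = 0.0
TREATMENT_DOSE_G = 5.0

# Dependent variable: measured plant height in cm (made-up values).
control_heights_cm = [10.1, 9.8, 10.4, 10.0]
treated_heights_cm = [12.0, 11.7, 12.3, 12.4]


def mean_difference(treated: list, control: list) -> float:
    """Average change in the dependent variable relative to the control group."""
    return mean(treated) - mean(control)


effect_cm = mean_difference(treated_heights_cm, control_heights_cm)
print(f"Dose {CONTROL_DOSE_G} g vs {TREATMENT_DOSE_G} g: "
      f"mean height difference = {effect_cm:.3f} cm")
```

Without the control group there would be no baseline, and the measured heights alone could not show whether the fertilizer made any difference.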

The process of generating and testing a hypothesis forms the backbone of the scientific method. When an idea has been confirmed over many experiments, it can be called a scientific theory. While a theory provides an explanation for a phenomenon, a scientific law provides a description of a phenomenon, according to The University of Waikato. One example is the law of conservation of energy (the first law of thermodynamics), which says that energy can neither be created nor destroyed.

A law describes an observed phenomenon, but it doesn't explain why the phenomenon exists or what causes it. "In science, laws are a starting place," said Peter Coppinger, an associate professor of biology and biomedical engineering at the Rose-Hulman Institute of Technology. "From there, scientists can then ask the questions, 'Why and how?'"

Laws are generally considered to be without exception, though some laws have been modified over time after further testing found discrepancies. For instance, Newton's laws of motion describe everything we've observed in the macroscopic world, but they break down at the subatomic level.

This does not mean theories are not meaningful. For a hypothesis to become a theory, scientists must conduct rigorous testing, typically across multiple disciplines by separate groups of scientists. Saying something is "just a theory" confuses the scientific definition of "theory" with the layperson's definition. To most people a theory is a hunch. In science, a theory is the framework for observations and facts, Tanner told Live Science.

The earliest evidence of science can be found as far back as records exist. Early tablets contain numerals and information about the solar system, which were derived by using careful observation, prediction and testing of those predictions. Science became decidedly more "scientific" over time, however.

1200s: Robert Grosseteste developed the framework for the proper methods of modern scientific experimentation, according to the Stanford Encyclopedia of Philosophy. His works included the principle that an inquiry must be based on measurable evidence that is confirmed through testing.

1400s: Leonardo da Vinci began his notebooks in pursuit of evidence that the human body is microcosmic. The artist, scientist and mathematician also gathered information about optics and hydrodynamics.

1500s: Nicolaus Copernicus advanced the understanding of the solar system with his discovery of heliocentrism. This is a model in which Earth and the other planets revolve around the sun, which is the center of the solar system.

1600s: Johannes Kepler built upon those observations with his laws of planetary motion. Galileo Galilei improved on a new invention, the telescope, and used it to study the sun and planets. The 1600s also saw advancements in the study of physics as Isaac Newton developed his laws of motion.

1700s: Benjamin Franklin discovered that lightning is electrical. He also contributed to the study of oceanography and meteorology. The understanding of chemistry also evolved during this century as Antoine Lavoisier, dubbed the father of modern chemistry, developed the law of conservation of mass.

1800s: Milestones included Alessandro Volta's discoveries regarding electrochemical series, which led to the invention of the battery. John Dalton also introduced atomic theory, which stated that all matter is composed of atoms that combine to form molecules. The basis of modern study of genetics advanced as Gregor Mendel unveiled his laws of inheritance. Later in the century, Wilhelm Conrad Röntgen discovered X-rays, while Georg Ohm's law provided the basis for understanding how to harness electrical charges.

1900s: The discoveries of Albert Einstein, who is best known for his theory of relativity, dominated the beginning of the 20th century. Einstein's theory of relativity is actually two separate theories. His special theory of relativity, which he outlined in a 1905 paper, "On the Electrodynamics of Moving Bodies," concluded that time must change according to the speed of a moving object relative to the frame of reference of an observer. His second theory, general relativity, which he published as "The Foundation of the General Theory of Relativity," advanced the idea that matter causes space to curve.

In 1952, Jonas Salk developed the polio vaccine, which reduced the incidence of polio in the United States by nearly 90%, according to Britannica. The following year, James D. Watson and Francis Crick discovered the structure of DNA, which is a double helix formed by base pairs attached to a sugar-phosphate backbone, according to the National Human Genome Research Institute.

2000s: The 21st century saw the first draft of the human genome completed, leading to a greater understanding of DNA. This advanced the study of genetics, its role in human biology and its use as a predictor of diseases and other disorders, according to the National Human Genome Research Institute.

- This video from City University of New York delves into the basics of what defines science.

- Learn about what makes science science in this book excerpt from Washington State University.

- This resource from the University of Michigan — Flint explains how to design your own scientific study.

Merriam-Webster Dictionary, Scientia. 2022. https://www.merriam-webster.com/dictionary/scientia

University of California, Berkeley, "Understanding Science: An Overview." 2022. https://undsci.berkeley.edu/article/0_0_0/intro_01

Highline College, "Scientific method." July 12, 2015. https://people.highline.edu/iglozman/classes/astronotes/scimeth.htm

North Carolina State University, "Science Scripts." https://projects.ncsu.edu/project/bio183de/Black/science/science_scripts.html

University of California, Santa Barbara. "What is an Independent variable?" October 31, 2017. http://scienceline.ucsb.edu/getkey.php?key=6045

Encyclopedia Britannica, "Control group." May 14, 2020. https://www.britannica.com/science/control-group

The University of Waikato, "Scientific Hypothesis, Theories and Laws." https://sci.waikato.ac.nz/evolution/Theories.shtml

Stanford Encyclopedia of Philosophy, Robert Grosseteste. May 3, 2019. https://plato.stanford.edu/entries/grosseteste/

Encyclopedia Britannica, "Jonas Salk." October 21, 2021. https://www.britannica.com/biography/Jonas-Salk

National Human Genome Research Institute, "Phosphate Backbone." https://www.genome.gov/genetics-glossary/Phosphate-Backbone

National Human Genome Research Institute, "What is the Human Genome Project?" https://www.genome.gov/human-genome-project/What

Live Science contributor Ashley Hamer updated this article on Jan. 16, 2022.


[ thee-uh-ree, theer-ee ]

Einstein's theory of relativity.

Synonyms: doctrine, law, principle

Synonyms: thesis, postulate, concept, notion, idea

number theory.

music theory.

conflicting theories of how children best learn to read.

the theory that there is life on other planets.

Synonyms: view, deduction, conclusion, judgment, opinion, thought

My theory is that he never stops to think words have consequences.

Synonyms: presumption, supposition, surmise, hypothesis

- a system of rules, procedures, and assumptions used to produce a result

- abstract knowledge or reasoning

I have a theory about that

- an ideal or hypothetical situation (esp. in the phrase in theory)

the theory of relativity

- a nontechnical name for hypothesis

/ thē′ə-rē, thîr′ē /

- A set of statements or principles devised to explain a group of facts or phenomena. Most theories that are accepted by scientists have been repeatedly tested by experiments and can be used to make predictions about natural phenomena.


- In science, an explanation or model that covers a substantial group of occurrences in nature and has been confirmed by a substantial number of experiments and observations. A theory is more general and better verified than a hypothesis. (See Big Bang theory, evolution, and relativity.)


Idioms and Phrases

In theory, mapping the human genome may lead to thousands of cures.


Example sentences.

“Our prosecutors have all too often inserted themselves into the political process based on the flimsiest of legal theories,” Barr went on.

Turn Wilson’s mathematical crank, and you get a related theory describing groups of those pieces — perhaps billiard ball molecules.

She also learns immediately that this theory is “not just incorrect but hateful, like saying that different races had different IQs” — and yet, “in my heart, I knew that Whorf was right,” that language does change the way you think.

It applies a different random error to each piece of information that’s encoded—which in theory makes it impossible to break without knowing the key.

Kindrachuk also works on ebola, and he says over the years many such theories have been put forth in scientific journals without provoking this kind of response.

But at the heart of this “Truther” conspiracy theory is the idea that “someone” wants to destroy Bill Cosby.

Is it sort of evidence of the Gladwellian 10,000 hours theory?

But a 2011 study of genetic evidence from 30 ethnic groups in India disproved this theory.

But, in theory, that started to change last week with the first meeting of SIX, the State Innovation Exchange.

So I was happy to see that the European theory of terroir was in action, promoting with pride the qualities of a specific region.

In the year of misery, of agony and suffering in general he had endured, he had settled upon one theory.

Dean Swift was indeed a misanthrope by theory, however he may have made exception to private life.

The other is the new theory: that the Bible is the work of many men whom God had inspired to speak or write the truth.

The evolution theory alleges that they were evolved, slowly, by natural processes out of previously existing matter.

And our surroundings at that particular moment were not the most favorable to coherent thought or plausible theory-building.

Related Words

- speculation

Definitions and idiom definitions from Dictionary.com Unabridged, based on the Random House Unabridged Dictionary, © Random House, Inc. 2023

Idioms from The American Heritage® Idioms Dictionary copyright © 2002, 2001, 1995 by Houghton Mifflin Harcourt Publishing Company. Published by Houghton Mifflin Harcourt Publishing Company.


13.7 Cosmos & Culture

Why Is 'Theory' Such a Confusing Word?

Marcelo Gleiser

Theoretically speaking, there is widespread confusion about the word "theory." Right?

Many people interpret the word as iffy knowledge, based mostly on speculative thinking. It is used indiscriminately to indicate things we know — that is, based on solid empirical evidence — and things we aren't sure about. Not a good mix at all, especially when certain theories speak directly to people's religious and value-based sensitivities, such as the "theory of evolution" or "Big Bang theory." There is also the danger of falling for meaning traps set by groups with specific agendas.

Looking at the New Oxford American Dictionary (NOAD) listing for "theory" doesn't help:

- A supposition or a system of ideas intended to explain something, especially one based on general principles independent of the thing to be explained: Darwin's theory of evolution.

- A set of principles on which the practice of an activity is based: a theory of education.

- An idea used to account for a situation or justify a course of action: my theory would be that...

So, there is usage within a scientific context ("the theory of...") and in a subjective context ("my theory is...") — an obvious problem.

When used in the context of a phrase, as "in theory," it gets worse. According to NOAD, "used in describing what is supposed to happen or be possible, usually with the implication that it does not in fact happen." [My italics.] Clearly, in this context, "in theory" means something that is probably wrong.

No wonder there is confusion. It is confusing!

A first step in trying to clarify the meaning(s) of theory is to understand in which context the word is being used, and to keep different contexts separate. So, if a scientist is using the word theory, as in "theory of relativity," "theory of evolution," or "Big Bang theory," it should be understood as a statement within a scientific context. In this case, a theory is certainly NOT mere subjective speculation, or something that is probably wrong, but, quite the contrary, something that has been scrutinized by the scientific process of empirical validation and has, so far, passed the test of explaining the data.

Unfortunately, even within the scientific context the word is misused, which only adds to the confusion. For example, "superstring theory" refers to a speculative theory in high-energy physics where the fundamental building blocks of matter are not elementary particles but tiny vibrating tubes of energy. Given the lack of empirical support so far for the idea, "superstring hypothesis" would be a much more appropriate characterization. Scientists may know the status of the hypothesis, but most people won't. We should be more careful.

A scientific theory is an accumulated body of knowledge constructed to describe specific natural phenomena, such as the force of gravity or biodiversity, that has been vetted by the scientific community. It is the best that we can come up with to make sense of nature at a given time.

Mind you, as our understanding of natural phenomena changes, theories can change as well. This doesn't necessarily mean that the old theories are wrong. It usually means that the old theories have a limited range of validity that does not cover newly discovered phenomena. For example, Newton's theory of gravity works really well to send rocket ships to Neptune, but not to describe a black hole. New theories are born from the cracks in old ones.

Unfortunately, suspicion of certain scientific theories can come from confusing subjective speculation with objective description. A scientific theory is different from a scientific hypothesis. A scientific hypothesis is an idea not yet empirically tested and, hence, still not vetted by the scientific community. A theory is a hypothesis that has been tested and vetted.

Much popular confusion could be avoided if the word theory were understood within the right context. The often-used trap of exploring the double meaning of the word theory to confuse or willfully misguide popular opinion should only catch those who don't know, or choose to neglect, what theory means within its scientific or subjective context.

Marcelo Gleiser is a theoretical physicist and cosmologist, and professor of natural philosophy, physics and astronomy at Dartmouth College. He is the co-founder of 13.7, a prolific author of papers and essays, and active promoter of science to the general public. His latest book is The Island of Knowledge: The Limits of Science and the Search for Meaning. You can keep up with Marcelo on Facebook and Twitter: @mgleiser.


April 2, 2013

"Just a Theory": 7 Misused Science Words

From "significant" to "natural," here are seven scientific terms that can prove troublesome for the public and across research disciplines

By Tia Ghose & LiveScience

Hypothesis. Theory. Law. These scientific words get bandied about regularly, yet the general public usually gets their meaning wrong.

Now, one scientist is arguing that people should do away with these misunderstood words altogether and replace them with the word "model." But those aren't the only science words that cause trouble, and simply replacing the words with others will just lead to new, widely misunderstood terms, several other scientists said.

"A word like 'theory' is a technical scientific term," said Michael Fayer, a chemist at Stanford University. "The fact that many people understand its scientific meaning incorrectly does not mean we should stop using it. It means we need better scientific education ."


From "theory" to "significant," here are seven scientific words that are often misused.

1. Hypothesis

The general public so widely misuses the words hypothesis, theory and law that scientists should stop using these terms, writes physicist Rhett Allain of Southeastern Louisiana University, in a blog post on Wired Science.

"I don't think at this point it's worth saving those words," Allain told LiveScience.

A hypothesis is a proposed explanation for something that can actually be tested. But "if you just ask anyone what a hypothesis is, they just immediately say 'educated guess,'" Allain said.

2. Just a theory?

Climate-change deniers and creationists have deployed the word "theory" to cast doubt on climate change and evolution.

"It's as though it weren't true because it's just a theory," Allain said.

That's despite the fact that an overwhelming amount of evidence supports both human-caused climate change and Darwin's theory of evolution.

Part of the problem is that the word "theory" means something very different in lay language than it does in science: A scientific theory is an explanation of some aspect of the natural world that has been substantiated through repeated experiments or testing. But to the average Jane or Joe, a theory is just an idea that lives in someone's head, rather than an explanation rooted in experiment and testing.

3. Model

However, theory isn't the only science phrase that causes trouble. Even Allain's preferred term to replace hypothesis, theory and law -- "model" -- has its troubles. The word not only refers to toy cars and runway walkers, but also means different things in different scientific fields. A climate model is very different from a mathematical model, for instance.

"Scientists in different fields use these terms differently from each other," John Hawks, an anthropologist at the University of Wisconsin-Madison, wrote in an email to LiveScience. "I don't think that 'model' improves matters. It has an appearance of solidity in physics right now mainly because of the Standard Model. By contrast, in genetics and evolution, 'models' are used very differently." (The Standard Model is the dominant theory governing particle physics.)

4. Skeptic

When people don't accept human-caused climate change, the media often describes those individuals as "climate skeptics." But that may give them too much credit, Michael Mann, a climate scientist at Pennsylvania State University, wrote in an email.

"Simply denying mainstream science based on flimsy, invalid and too-often agenda-driven critiques of science is not skepticism at all. It is contrarianism ... or denial," Mann told LiveScience.

Instead, true skeptics are open to scientific evidence and are willing to evenly assess it.

"All scientists should be skeptics. True skepticism is, as [Carl] Sagan described it, the 'self-correcting machinery' of science," Mann said.

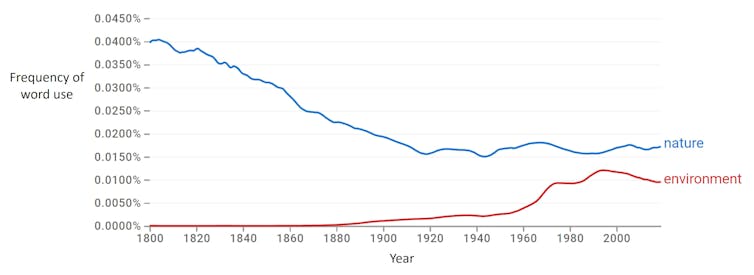

5. Nature vs. nurture

The phrase "nature versus nurture" also gives scientists a headache, because it radically simplifies a very complicated process, said Dan Kruger, an evolutionary biologist at the University of Michigan.

"This is something that modern evolutionists cringe at," Kruger told LiveScience.

Genes may influence human beings, but so, too, do epigenetic changes. These modifications alter which genes get turned on, and are both heritable and easily influenced by the environment. The environment that shapes human behavior can be anything from the chemicals a fetus is exposed to in the womb to the block a person grew up on to the type of food they ate as a child, Kruger said. All these factors interact in a messy, unpredictable way.

6. Significant

Another word that sets scientists' teeth on edge is "significant."

"That's a huge weasel word. Does it mean statistically significant, or does it mean important?" said Michael O'Brien, the dean of the College of Arts and Science at the University of Missouri.

In statistics, something is significant if a difference is unlikely to be due to random chance. But that may not translate into a meaningful difference, in, say, headache symptoms or IQ.
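
The gap between "statistically significant" and "important" can be illustrated with a short calculation. The sketch below is only an illustration under stated assumptions: the group means, the shared standard deviation of 15 points, and the sample size of 50,000 per group are hypothetical numbers, not data from any study, and the helper names `two_sample_z_test` and `cohens_d` are made up for this example. It uses a simple two-sample z-test, which assumes the standard deviation is known.

```python
import math


def two_sample_z_test(mean_a, mean_b, sd, n_per_group):
    """Return (z, two-sided p-value) for two equal-sized groups
    that share one known standard deviation."""
    standard_error = sd * math.sqrt(2.0 / n_per_group)
    z = (mean_a - mean_b) / standard_error
    # For a standard normal Z, P(|Z| > |z|) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value


def cohens_d(mean_a, mean_b, sd):
    """Standardized effect size: the mean difference in units of the standard deviation."""
    return (mean_a - mean_b) / sd


# Hypothetical IQ-style scores: a half-point difference between two very large groups.
z, p_value = two_sample_z_test(mean_a=100.5, mean_b=100.0, sd=15.0, n_per_group=50_000)
effect = cohens_d(100.5, 100.0, 15.0)

print(f"z = {z:.2f}, p = {p_value:.2g}, Cohen's d = {effect:.3f}")
```

With these assumed numbers, the p-value falls far below the conventional 0.05 threshold, so the difference counts as statistically significant, yet the effect size is about one-thirtieth of a standard deviation, a difference few people would call meaningful in practice.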

7. Natural

"Natural" is another bugaboo for scientists. The term has become synonymous with being virtuous, healthy or good. But not everything artificial is unhealthy, and not everything that's natural is good for you.

"Uranium is natural, and if you inject enough of it, you're going to die," Kruger said.

Natural's sibling "organic" also has a problematic meaning, he said. While organic simply means "carbon-based" to scientists, the term is now used to describe pesticide-free peaches and high-end cotton sheets, as well.

Bad education

But though these words may be routinely misunderstood, the real problem, scientists say, is that people don't get rigorous science education in middle school and high school. As a result, the public doesn't understand how scientific explanations are formed, tested and accepted.

What's more, the human brain may not have evolved to intuitively understand key scientific concepts such as hypotheses or theories, Kruger said.

Most people tend to use mental shortcuts to make sense of the cacophony of information they're presented with every day.

One of those tendencies is to make a "binary distinction between something that is true in an absolute sense and something that's false or a lie," Kruger said. "With science, it's more of a continuum. We're continually building our understanding."

Copyright 2013 LiveScience, a TechMediaNetwork company. All rights reserved. This material may not be published, broadcast, rewritten or redistributed.

Definitions of Fact, Theory, and Law in Scientific Work

Science uses specialized terms whose meanings differ from everyday usage. These definitions correspond to the way scientists typically use these terms in the context of their work. Note, especially, that the meaning of “theory” in science is different from the meaning of “theory” in everyday conversation.

- Fact: In science, an observation that has been repeatedly confirmed and for all practical purposes is accepted as “true.” Truth in science, however, is never final and what is accepted as a fact today may be modified or even discarded tomorrow.

- Hypothesis: A tentative statement about the natural world leading to deductions that can be tested. If the deductions are verified, the hypothesis is provisionally corroborated. If the deductions are incorrect, the original hypothesis is proved false and must be abandoned or modified. Hypotheses can be used to build more complex inferences and explanations.

- Law: A descriptive generalization about how some aspect of the natural world behaves under stated circumstances.

- Theory: In science, a well-substantiated explanation of some aspect of the natural world that can incorporate facts, laws, inferences, and tested hypotheses.

Sources: The Role of Theory in Advancing 21st-Century Biology, National Academy of Sciences; Teaching About Evolution and the Nature of Science, National Academy of Sciences.

Word Meaning

Word meaning has played a somewhat marginal role in early contemporary philosophy of language, which was primarily concerned with the structural features of sentence meaning and showed less interest in the nature of the word-level input to compositional processes. Nowadays, it is well-established that the study of word meaning is crucial to the inquiry into the fundamental properties of human language. This entry provides an overview of the way issues related to word meaning have been explored in analytic philosophy and a summary of relevant research on the subject in neighboring scientific domains. Though the main focus will be on philosophical problems, contributions from linguistics, psychology, neuroscience and artificial intelligence will also be considered, since research on word meaning is highly interdisciplinary.

- 1.1 The Notion of Word

- 1.2 Theories of Word Meaning

- 2.1 Classical Traditions

- 2.2 Historical-Philological Semantics

- 3.1 Early Contemporary Views

- 3.2 Grounding and Lexical Competence

- 3.3 The Externalist Turn

- 3.4 Internalism

- 3.5 Contextualism, Minimalism, and the Lexicon

- 4.1 Structuralist Semantics

- 4.2 Generativist Semantics

- 4.3 Decompositional Approaches

- 4.4 Relational Approaches

- 5.1 Cognitive Linguistics

- 5.2 Psycholinguistics

- 5.3 Neurolinguistics

- Other Internet Resources

- Related Entries

The notions of word and word meaning are problematic to pin down, and this is reflected in the difficulties one encounters in defining the basic terminology of lexical semantics. In part, this depends on the fact that the term ‘word’ itself is highly polysemous (see, e.g., Matthews 1991; Booij 2007; Lieber 2010). For example, in ordinary parlance ‘word’ is ambiguous between a type-level reading (as in “Color and colour are spellings of the same word”), an occurrence-level reading (as in “there are thirteen words in the tongue-twister How much wood would a woodchuck chuck if a woodchuck could chuck wood?”), and a token-level reading (as in “John erased the last two words on the blackboard”). Before proceeding further, let us then elucidate the notion of word in more detail (Section 1.1), and lay out the key questions that will guide our discussion of word meaning in the rest of the entry (Section 1.2).

We can distinguish two fundamental approaches to the notion of word. On one side, we have linguistic approaches, which characterize the notion of word by reflecting on its explanatory role in linguistic research (for a survey on explanation in linguistics, see Egré 2015). These approaches often end up splitting the notion of word into a number of more fine-grained and theoretically manageable notions, but still tend to regard ‘word’ as a term that zeroes in on a scientifically respectable concept (e.g., Di Sciullo & Williams 1987). For example, words are the primary locus of stress and tone assignment, the basic domain of morphological conditions on affixation, cliticization, compounding, and the theme of phonological and morphological processes of assimilation, vowel shift, metathesis, and reduplication (Bromberger 2011).

On the other side, we have metaphysical approaches, which attempt to pin down the notion of word by inquiring into the metaphysical nature of words. These approaches typically deal with such questions as “what are words?”, “how should words be individuated?”, and “under what conditions do two utterances count as utterances of the same word?”. For example, Kaplan (1990, 2011) has proposed to replace the orthodox type-token account of the relation between words and word tokens with a “common currency” view on which words relate to their tokens as continuants relate to stages in four-dimensionalist metaphysics (see the entries on types and tokens and identity over time). Other contributions to this debate can be found, among others, in McCulloch (1991), Cappelen (1999), Alward (2005), Hawthorne & Lepore (2011), Sainsbury & Tye (2012), Gasparri (2016), and Irmak (forthcoming).

For the purposes of this entry, we can rely on the following stipulation. Every natural language has a lexicon organized into lexical entries, which contain information about word types or lexemes. These are the smallest linguistic expressions that are conventionally associated with a non-compositional meaning and can be articulated in isolation to convey semantic content. Word types relate to word tokens and occurrences just like phonemes relate to phones in phonological theory. To understand the parallelism, think of the variations in the place of articulation of the phoneme /n/, which is pronounced as the voiced bilabial nasal [m] in “ten bags” and as the voiced velar nasal [ŋ] in “ten gates”. Just as phonemes are abstract representations of sets of phones (each defining one way the phoneme can be instantiated in speech), lexemes can be defined as abstract representations of sets of words (each defining one way the lexeme can be instantiated in sentences). Thus, ‘do’, ‘does’, ‘done’ and ‘doing’ are morphologically and graphically marked realizations of the same abstract word type do. To wrap everything into a single formula, we can say that the lexical entries listed in a lexicon set the parameters defining the instantiation potential of word types in sentences, utterances and inscriptions (cf. Murphy 2010). In what follows, unless otherwise indicated, our talk of “word meaning” should be understood as talk of “word type meaning” or “lexeme meaning”, in the sense we just illustrated.
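
The distinction between occurrences, spelled forms, and lexemes can be made concrete with a small computational sketch. It is only a toy illustration under stated assumptions: the one-entry `LEXICON`, the helper names `occurrences` and `lexeme_of`, and the spelling-based tokenizer are hypothetical simplifications, not a model proposed in the literature cited above. In particular, identifying a word type with a lowercase spelling would wrongly separate "color" and "colour", and token-level readings (physical inscriptions on a blackboard) have no counterpart in the code.

```python
import re
from collections import Counter

# Hypothetical lexical entries: each lexeme lists the word forms that realize it.
LEXICON = {
    "do": {"do", "does", "done", "doing"},
}

# Reverse index from word form to lexeme, built from the toy lexicon.
FORM_TO_LEXEME = {
    form: lexeme for lexeme, forms in LEXICON.items() for form in forms
}


def occurrences(text):
    """Split text into lowercase word occurrences (a crude, spelling-based tokenizer)."""
    return re.findall(r"[a-z]+", text.lower())


def lexeme_of(form):
    """Return the lexeme a form realizes, or the form itself if the toy lexicon lacks it."""
    key = form.lower()
    return FORM_TO_LEXEME.get(key, key)


twister = "How much wood would a woodchuck chuck if a woodchuck could chuck wood?"
words = occurrences(twister)
type_counts = Counter(words)

print(len(words))        # 13 occurrences, matching the occurrence-level reading
print(len(type_counts))  # 9 distinct spelled forms
print({lexeme_of(w) for w in ["do", "does", "done", "doing"]})  # {'do'}
```

The thirteen occurrences collapse into nine distinct forms because "wood", "a", "woodchuck" and "chuck" each occur twice, and the four inflected forms of the verb all map to the single lexeme do.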

As with general theories of meaning (see the entry on theories of meaning), two kinds of theory of word meaning can be distinguished. The first kind, which we can label a semantic theory of word meaning, is a theory interested in clarifying what meaning-determining information is encoded by the words of a natural language. A framework establishing that the word ‘bachelor’ encodes the lexical concept adult unmarried male would be an example of a semantic theory of word meaning. The second kind, which we can label a foundational theory of word meaning, is a theory interested in elucidating the facts in virtue of which words come to have the semantic properties they have for their users. A framework investigating the dynamics of semantic change and social coordination in virtue of which the word ‘bachelor’ is assigned the function of expressing the lexical concept adult unmarried male would be an example of a foundational theory of word meaning. Likewise, it would be the job of a foundational theory of word meaning to determine whether words have the semantic properties they have in virtue of social conventions, or whether social conventions do not provide explanatory purchase on the facts that ground word meaning (see the entry on convention).

Obviously, the endorsement of a given semantic theory is bound to place important constraints on the claims one might propose about the foundational attributes of word meaning, and vice versa. Semantic and foundational concerns are often interdependent, and it is difficult to find theories of word meaning which are either purely semantic or purely foundational. According to Ludlow (2014), for example, the fact that word meaning is systematically underdetermined (a semantic matter) can be explained in part by looking at the processes of linguistic negotiation whereby discourse partners converge on the assignment of shared meanings to the words of their language (a foundational matter). However, semantic and foundational theories remain in principle different and designed to answer partly non-overlapping sets of questions.

Our focus in this entry will be on semantic theories of word meaning, i.e., on theories that try to provide an answer to such questions as “what is the nature of word meaning?”, “what do we know when we know the meaning of a word?”, and “what (kind of) information must a speaker associate with the words of a language in order to be a competent user of its lexicon?”. However, we will engage in foundational considerations whenever necessary to clarify how a given framework addresses issues in the domain of a semantic theory of word meaning.

2. Historical Background

The study of word meaning became a mature academic enterprise in the 19th century, with the birth of historical-philological semantics (Section 2.2). Yet, matters related to word meaning had been the subject of much debate in earlier times. We can distinguish three major classical approaches to word meaning: speculative etymology, rhetoric, and classical lexicography (Meier-Oeser 2011; Geeraerts 2013). We describe them briefly in Section 2.1.