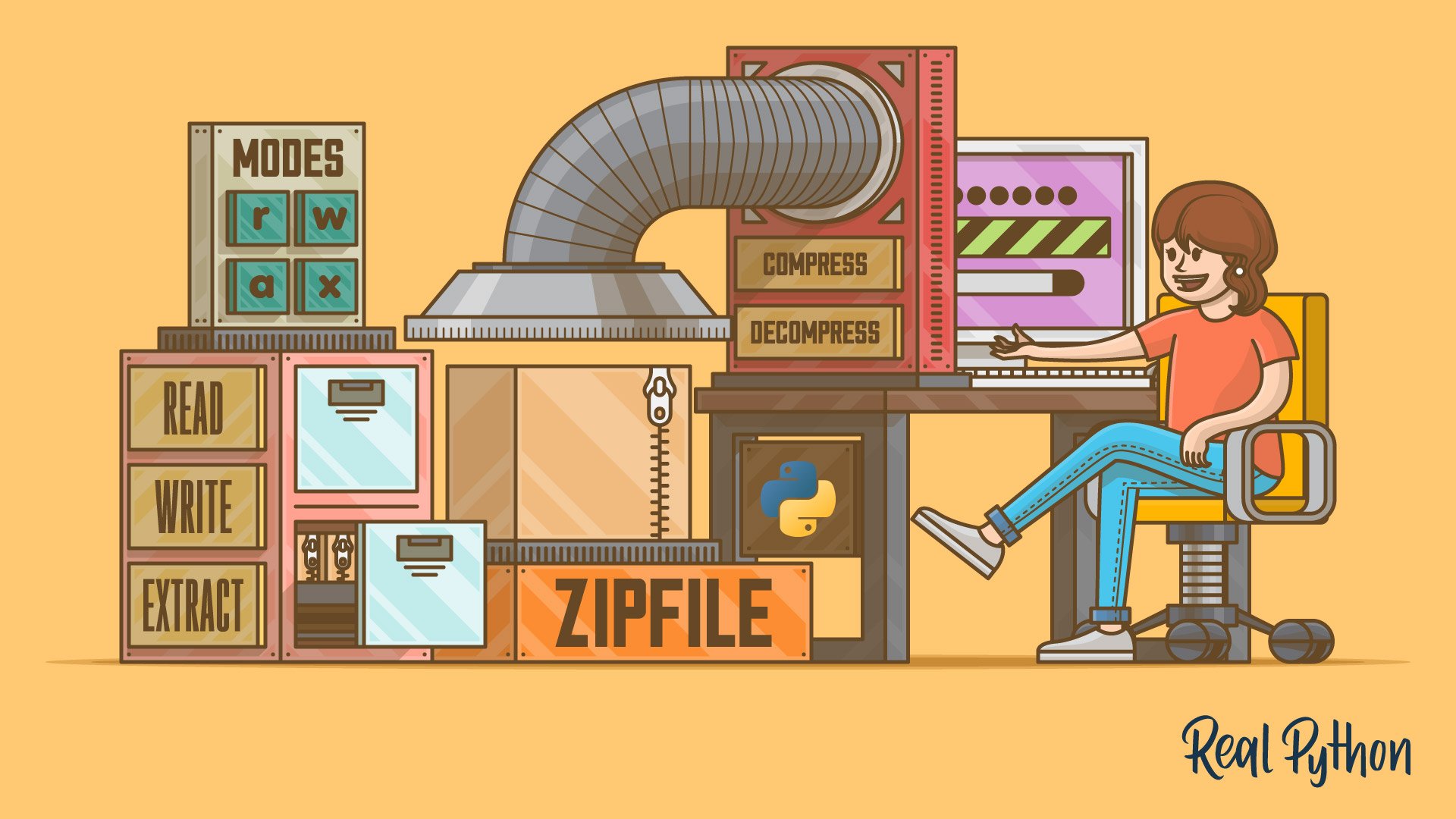

Python's zipfile: Manipulate Your ZIP Files Efficiently

Table of Contents

- What Is a ZIP File?
- Why Use ZIP Files?
- Can Python Manipulate ZIP Files?
- Opening ZIP Files for Reading and Writing
- Reading Metadata From ZIP Files
- Reading From and Writing to Member Files
- Reading the Content of Member Files as Text
- Extracting Member Files From Your ZIP Archives
- Closing ZIP Files After Use
- Creating a ZIP File From Multiple Regular Files
- Building a ZIP File From a Directory
- Compressing Files and Directories
- Creating ZIP Files Sequentially
- Extracting Files and Directories
- Finding Path in a ZIP File
- Building Importable ZIP Files With PyZipFile
- Running zipfile From Your Command Line
- Using Other Libraries to Manage ZIP Files


Python’s zipfile is a standard library module intended to manipulate ZIP files. This file format is a widely adopted industry standard when it comes to archiving and compressing digital data. You can use it to package together several related files. It also allows you to reduce the size of your files and save disk space. Most importantly, it facilitates data exchange over computer networks.

Knowing how to create, read, write, populate, extract, and list ZIP files using the zipfile module is a useful skill to have as a Python developer or a DevOps engineer.

In this tutorial, you’ll learn how to:

- Read, write, and extract files from ZIP files with Python’s zipfile

- Read metadata about the content of ZIP files using zipfile

- Use zipfile to manipulate member files in existing ZIP files

- Create new ZIP files to archive and compress files

If you commonly deal with ZIP files, then this knowledge can help you streamline your workflow and process your files with confidence.

To get the most out of this tutorial, you should know the basics of working with files, using the with statement, handling file system paths with pathlib, and working with classes and object-oriented programming.

To get the files and archives that you’ll use to code the examples in this tutorial, click the link below:

Get Materials: Click here to get a copy of the files and archives that you’ll use to run the examples in this zipfile tutorial.

Getting Started With ZIP Files

ZIP files are a well-known tool in today’s digital world. They’re widely used for cross-platform data exchange over computer networks, notably the Internet.

You can use ZIP files for bundling regular files together into a single archive, compressing your data to save some disk space, distributing your digital products, and more. In this tutorial, you’ll learn how to manipulate ZIP files using Python’s zipfile module.

Because the terminology around ZIP files can be confusing at times, this tutorial will stick to the following conventions regarding terminology:

| Term | Meaning |
|---|---|
| ZIP file, ZIP archive, or archive | A physical file that uses the ZIP file format |
| File | A regular computer file |
| Member file | A file that is part of an existing ZIP file |

Having these terms clear in your mind will help you avoid confusion while you read through the upcoming sections. Now you’re ready to continue learning how to manipulate ZIP files efficiently in your Python code!

You’ve probably already encountered and worked with ZIP files. Yes, those with the .zip file extension are everywhere! ZIP files, also known as ZIP archives, are files that use the ZIP file format.

PKWARE is the company that created and first implemented this file format. The company put together and maintains the current format specification, which is publicly available and allows the creation of products, programs, and processes that read and write files using the ZIP file format.

The ZIP file format is a cross-platform, interoperable file storage and transfer format. It combines lossless data compression, file management, and data encryption.

Data compression isn’t a requirement for an archive to be considered a ZIP file. So you can have compressed or uncompressed member files in your ZIP archives. The ZIP file format supports several compression algorithms, though Deflate is the most common. The format also supports information integrity checks with CRC32.

Even though there are other similar archiving formats, such as RAR and TAR files, the ZIP file format has quickly become a common standard for efficient data storage and for data exchange over computer networks.

ZIP files are everywhere. For example, office suites such as Microsoft Office and LibreOffice rely on the ZIP file format as their document container file. This means that .docx, .xlsx, .pptx, .odt, .ods, and .odp files are actually ZIP archives containing several files and folders that make up each document. Other common files that use the ZIP format include .jar, .war, and .epub files.

You may be familiar with GitHub, which provides web hosting for software development and version control using Git. GitHub uses ZIP files to package software projects when you download them to your local computer. For example, you can download the exercise solutions for the Python Basics: A Practical Introduction to Python 3 book in a ZIP file, or you can download any other project of your choice.

ZIP files allow you to aggregate, compress, and encrypt files into a single interoperable and portable container. You can stream ZIP files, split them into segments, make them self-extracting, and more.

Knowing how to create, read, write, and extract ZIP files can be a useful skill for developers and professionals who work with computers and digital information. Among other benefits, ZIP files allow you to:

- Reduce the size of files and their storage requirements without losing information

- Improve transfer speed over the network due to reduced size and single-file transfer

- Pack several related files together into a single archive for efficient management

- Bundle your code into a single archive for distribution purposes

- Secure your data by using encryption, which is a common requirement nowadays

- Check the integrity of your information to detect accidental changes to your data

These features make ZIP files a useful addition to your Python toolbox if you’re looking for a flexible, portable, and reliable way to archive your digital files.

Can Python manipulate ZIP files? Yes! Python has several tools that allow you to do so. Some of these tools are available in the Python standard library. They include low-level libraries for compressing and decompressing data using specific compression algorithms, such as zlib, bz2, lzma, and others.

Python also provides a high-level module called zipfile specifically designed to create, read, write, extract, and list the content of ZIP files. In this tutorial, you’ll learn about Python’s zipfile and how to use it effectively.

Manipulating Existing ZIP Files With Python’s zipfile

Python’s zipfile provides convenient classes and functions that allow you to create, read, write, extract, and list the content of your ZIP files. Here are some additional features that zipfile supports:

- ZIP files greater than 4 GiB (ZIP64 files)

- Data decryption

- Several compression algorithms, such as Deflate, Bzip2, and LZMA

- Information integrity checks with CRC32

Be aware that zipfile does have a few limitations. For example, the current data decryption feature can be pretty slow because it uses pure Python code. The module can’t handle the creation of encrypted ZIP files. Finally, the use of multi-disk ZIP files isn’t supported either. Despite these limitations, zipfile is still a great and useful tool. Keep reading to explore its capabilities.

In the zipfile module, you’ll find the ZipFile class. This class works pretty much like Python’s built-in open() function, allowing you to open your ZIP files using different modes. The read mode ("r") is the default. You can also use the write ("w"), append ("a"), and exclusive ("x") modes. You’ll learn more about each of these in a moment.

ZipFile implements the context manager protocol so that you can use the class in a with statement. This feature allows you to quickly open and work with a ZIP file without worrying about closing the file after you finish your work.

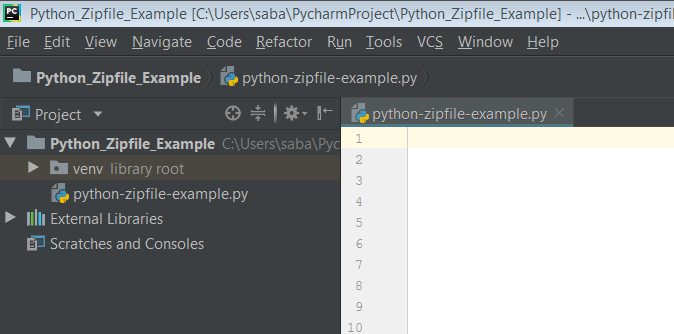

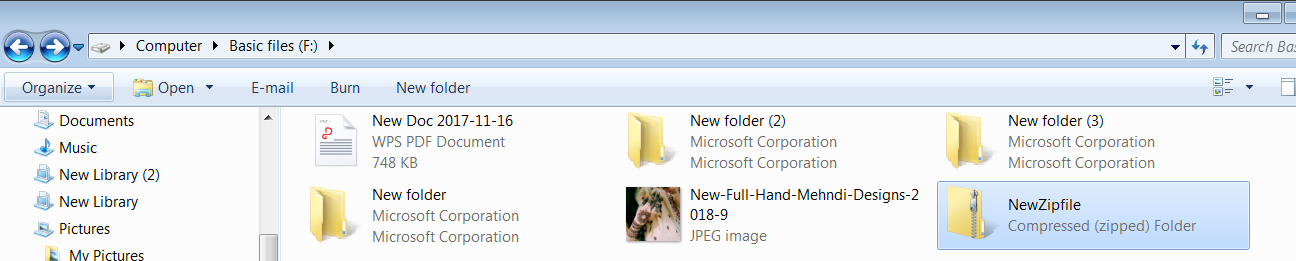

Before writing any code, make sure you have a copy of the files and archives that you’ll be using.

To get your working environment ready, place the downloaded resources into a directory called python-zipfile/ in your home folder. Once you have the files in the right place, move to the newly created directory and fire up a Python interactive session there.

To warm up, you’ll start by reading the ZIP file called sample.zip. To do that, you can use ZipFile in reading mode:

The first argument to the initializer of ZipFile can be a string representing the path to the ZIP file that you need to open. This argument can accept file-like and path-like objects too. In this example, you use a string-based path.

The second argument to ZipFile is a single-letter string representing the mode that you’ll use to open the file. As you learned at the beginning of this section, ZipFile can accept four possible modes, depending on your needs. The mode positional argument defaults to "r", so you can omit it if you want to open the archive for reading only.
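The tutorial’s sample.zip isn’t reproduced here, so the following minimal sketch first builds a small stand-in archive in a temporary directory and then opens it in read mode. The member names and contents are placeholders, and .writestr() is used only as a shortcut to populate the stand-in:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"

    # Assumption: a stand-in for the tutorial's sample.zip with placeholder members.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")
        archive.writestr("lorem.md", "# Lorem Ipsum\n")

    # Open the archive for reading. "r" is the default, so mode could be omitted.
    with zipfile.ZipFile(archive_path, mode="r") as archive:
        archive.printdir()  # Print a table listing the member files
        # .namelist() is covered later; it's used here only to inspect the result.
        member_names = archive.namelist()
```

Because the archive path here is a pathlib.Path object, the sketch also exercises the path-like form of the first argument.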

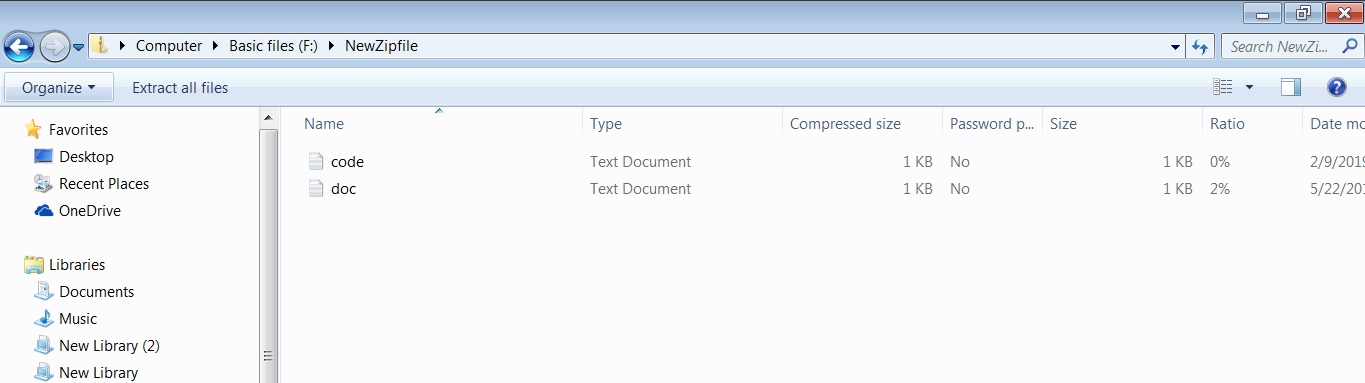

Inside the with statement, you call .printdir() on archive. The archive variable now holds the instance of ZipFile itself. This method provides a quick way to display the content of the underlying ZIP file on your screen. Its output has a user-friendly tabular format with three informative columns: File Name, Modified, and Size.

If you want to make sure that you’re targeting a valid ZIP file before you try to open it, then you can wrap ZipFile in a try … except statement and catch any BadZipFile exception:

The first example successfully opens sample.zip without raising a BadZipFile exception. That’s because sample.zip has a valid ZIP format. On the other hand, the second example doesn’t succeed in opening bad_sample.zip, because the file is not a valid ZIP file.
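A minimal sketch of this try … except pattern could look like the following. The try_open() helper is hypothetical, and both files are stand-ins created on the fly: a tiny valid archive and a plain file that merely carries a .zip name:

```python
import tempfile
import zipfile
from pathlib import Path


def try_open(path):
    """Return True if path opens as a ZIP file, False on BadZipFile."""
    try:
        with zipfile.ZipFile(path) as archive:
            archive.printdir()
        return True
    except zipfile.BadZipFile as error:
        print(f"Error: {error}")
        return False


with tempfile.TemporaryDirectory() as tmp:
    # Assumption: stand-ins for the tutorial's sample.zip and bad_sample.zip.
    good_path = Path(tmp) / "sample.zip"
    with zipfile.ZipFile(good_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")

    bad_path = Path(tmp) / "bad_sample.zip"
    bad_path.write_bytes(b"This is not a ZIP archive")

    good_result = try_open(good_path)
    bad_result = try_open(bad_path)
```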

To check for a valid ZIP file, you can also use the is_zipfile() function:

In these examples, you use a conditional statement with is_zipfile() as a condition. This function takes a filename argument that holds the path to a ZIP file in your file system. This argument can accept string, file-like, or path-like objects. The function returns True if filename is a valid ZIP file. Otherwise, it returns False.
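As a rough illustration, the check with is_zipfile() might be sketched like this. The two files are again stand-ins built in a temporary directory rather than the tutorial’s own materials:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    # Assumption: placeholder files standing in for sample.zip and bad_sample.zip.
    good_path = Path(tmp) / "sample.zip"
    with zipfile.ZipFile(good_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")

    bad_path = Path(tmp) / "bad_sample.zip"
    bad_path.write_bytes(b"This is not a ZIP archive")

    checks = {}
    for path in (good_path, bad_path):
        if zipfile.is_zipfile(path):
            print(f"{path.name} is a valid ZIP file")
            checks[path.name] = True
        else:
            print(f"{path.name} is not a valid ZIP file")
            checks[path.name] = False
```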

Now say you want to add hello.txt to a hello.zip archive using ZipFile . To do that, you can use the write mode ( "w" ). This mode opens a ZIP file for writing. If the target ZIP file exists, then the "w" mode truncates it and writes any new content you pass in.

Note: If you’re using ZipFile with existing files, then you should be careful with the "w" mode. You can truncate your ZIP file and lose all the original content.

If the target ZIP file doesn’t exist, then ZipFile creates it for you when you close the archive:

After running this code, you’ll have a hello.zip file in your python-zipfile/ directory. If you list the file content using .printdir() , then you’ll notice that hello.txt will be there. In this example, you call .write() on the ZipFile object. This method allows you to write member files into your ZIP archives. Note that the argument to .write() should be an existing file.
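The write-mode steps described above can be sketched as follows. Since this sketch works in a temporary directory instead of python-zipfile/, it passes arcname (explained later in this tutorial) so that the member is stored under its bare filename:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    # Assumption: a placeholder hello.txt created just for this sketch.
    hello_txt = Path(tmp) / "hello.txt"
    hello_txt.write_text("Hello, World!\n", encoding="utf-8")

    hello_zip = Path(tmp) / "hello.zip"

    # "w" creates hello.zip, or truncates it if it already exists.
    with zipfile.ZipFile(hello_zip, mode="w") as archive:
        archive.write(hello_txt, arcname="hello.txt")

    # Reopen in read mode to confirm the member is there.
    with zipfile.ZipFile(hello_zip, mode="r") as archive:
        archive.printdir()
        names_after_write = archive.namelist()
```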

Note: ZipFile is smart enough to create a new archive when you use the class in writing mode and the target archive doesn’t exist. However, the class doesn’t create new directories in the path to the target ZIP file if those directories don’t already exist.

That explains why the following code won’t work:

Because the missing/ directory in the path to the target hello.zip file doesn’t exist, you get a FileNotFoundError exception.
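To see this failure in isolation, here’s a small sketch that targets a missing/ directory inside a fresh temporary directory and records the exception instead of letting it propagate:

```python
import tempfile
import zipfile
from pathlib import Path

caught = None

with tempfile.TemporaryDirectory() as tmp:
    # The missing/ directory is never created, so the open call fails.
    target = Path(tmp) / "missing" / "hello.zip"
    try:
        with zipfile.ZipFile(target, mode="w") as archive:
            archive.writestr("hello.txt", "Hello, World!\n")
    except FileNotFoundError as error:
        caught = type(error).__name__
        print(f"{caught}: {error}")
```

If you need the directory, one option is to create it first, for example with Path.mkdir(parents=True, exist_ok=True), before opening the archive.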

The append mode ( "a" ) allows you to append new member files to an existing ZIP file. This mode doesn’t truncate the archive, so its original content is safe. If the target ZIP file doesn’t exist, then the "a" mode creates a new one for you and then appends any input files that you pass as an argument to .write() .

To try out the "a" mode, go ahead and add the new_hello.txt file to your newly created hello.zip archive:

Here, you use the append mode to add new_hello.txt to the hello.zip file. Then you run .printdir() to confirm that the new file is present in the ZIP file.
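A self-contained sketch of the append step might look like this. It first creates a stand-in hello.zip in write mode and then reopens it in append mode. The file contents are placeholders:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    work_dir = Path(tmp)
    # Assumption: placeholder input files created just for this sketch.
    for name in ("hello.txt", "new_hello.txt"):
        (work_dir / name).write_text(f"Content of {name}\n", encoding="utf-8")

    hello_zip = work_dir / "hello.zip"

    # Create the archive with a single member.
    with zipfile.ZipFile(hello_zip, mode="w") as archive:
        archive.write(work_dir / "hello.txt", arcname="hello.txt")

    # Append a second member without truncating the existing content.
    with zipfile.ZipFile(hello_zip, mode="a") as archive:
        archive.write(work_dir / "new_hello.txt", arcname="new_hello.txt")

    with zipfile.ZipFile(hello_zip, mode="r") as archive:
        archive.printdir()
        names_after_append = archive.namelist()
```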

ZipFile also supports an exclusive mode ("x"). This mode allows you to exclusively create new ZIP files and write new member files into them. You’ll use the exclusive mode when you want to make a new ZIP file without overwriting an existing one. If the target file already exists, then you get a FileExistsError.

Finally, if you create a ZIP file using the "w" , "a" , or "x" mode and then close the archive without adding any member files, then ZipFile creates an empty archive with the appropriate ZIP format.
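The exclusive mode and the empty-archive behavior can be illustrated together. This is only a sketch, and the archive name new_archive.zip is a placeholder:

```python
import tempfile
import zipfile
from pathlib import Path

second_attempt = None

with tempfile.TemporaryDirectory() as tmp:
    new_zip = Path(tmp) / "new_archive.zip"

    # First attempt: the file doesn't exist yet, so "x" creates it.
    # Closing without adding members leaves a valid, empty archive.
    with zipfile.ZipFile(new_zip, mode="x") as archive:
        pass

    is_valid_empty = zipfile.is_zipfile(new_zip)
    with zipfile.ZipFile(new_zip) as archive:
        empty_names = archive.namelist()

    # Second attempt: the file now exists, so "x" refuses to overwrite it.
    try:
        with zipfile.ZipFile(new_zip, mode="x") as archive:
            pass
    except FileExistsError as error:
        second_attempt = type(error).__name__
```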

You’ve already put .printdir() into action. It’s a useful method that you can use to list the content of your ZIP files quickly. Along with .printdir() , the ZipFile class provides several handy methods for extracting metadata from existing ZIP files.

Here’s a summary of those methods:

| Method | Description |
|---|---|
| .getinfo(name) | Returns a ZipInfo object with information about the member file provided by name. Note that name must hold the path to the target file inside the underlying ZIP file. |
| .infolist() | Returns a list of ZipInfo objects, one per member file. |
| .namelist() | Returns a list holding the names of all the member files in the underlying archive. The names in this list are valid arguments to .getinfo(). |

With these three tools, you can retrieve a lot of useful information about the content of your ZIP files. For example, take a look at the following example, which uses .getinfo() :

As you learned in the table above, .getinfo() takes a member file as an argument and returns a ZipInfo object with information about it.

Note: ZipInfo isn’t intended to be instantiated directly. The .getinfo() and .infolist() methods return ZipInfo objects automatically when you call them. However, ZipInfo includes a class method called .from_file() , which allows you to instantiate the class explicitly if you ever need to do it.

ZipInfo objects have several attributes that allow you to retrieve valuable information about the target member file. For example, .file_size and .compress_size hold the size, in bytes, of the original and compressed files, respectively. The class also has some other useful attributes, such as .filename and .date_time, which return the filename and the last modification date.
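Here’s a minimal sketch of .getinfo() and the attributes mentioned above. It uses a stand-in archive with placeholder content rather than the tutorial’s sample.zip:

```python
import tempfile
import zipfile
from pathlib import Path

content = "Hello, Pythonista!\n"

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive with one uncompressed (stored) member.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", content)

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        info = archive.getinfo("hello.txt")
        info_summary = {
            "filename": info.filename,
            "file_size": info.file_size,          # Original size in bytes
            "compress_size": info.compress_size,  # Size inside the archive
            "date_time": info.date_time,          # (year, month, day, h, m, s)
        }

print(info_summary)
```

Because the stand-in archive uses the default stored method, the two sizes come out equal.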

Note: By default, ZipFile doesn’t compress the input files to add them to the final archive. That’s why the size and the compressed size are the same in the above example. You’ll learn more about this topic in the Compressing Files and Directories section below.

With .infolist() , you can extract information from all the files in a given archive. Here’s an example that uses this method to generate a minimal report with information about all the member files in your sample.zip archive:

The for loop iterates over the ZipInfo objects from .infolist() , retrieving the filename, the last modification date, the normal size, and the compressed size of each member file. In this example, you’ve used datetime to format the date in a human-readable way.
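A report along these lines could be sketched as follows. The archive and its members are placeholders, and the exact layout of the printed report is an arbitrary choice:

```python
import datetime
import tempfile
import zipfile
from pathlib import Path

report = []

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in for sample.zip with two placeholder members.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")
        archive.writestr("lorem.md", "# Lorem Ipsum\n")

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        for info in archive.infolist():
            # .date_time is a six-item tuple that datetime can unpack directly.
            modified = datetime.datetime(*info.date_time)
            report.append((info.filename, info.file_size, info.compress_size))
            print(f"Filename: {info.filename}")
            print(f"Modified: {modified:%Y-%m-%d %H:%M:%S}")
            print(f"Normal size: {info.file_size} bytes")
            print(f"Compressed size: {info.compress_size} bytes")
            print("-" * 20)
```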

Note: The example above was adapted from zipfile — ZIP Archive Access .

If you just need to perform a quick check on a ZIP file and list the names of its member files, then you can use .namelist() :

Because the filenames in this output are valid arguments to .getinfo() , you can combine these two methods to retrieve information about selected member files only.

For example, you may have a ZIP file containing different types of member files ( .docx , .xlsx , .txt , and so on). Instead of getting the complete information with .infolist() , you just need to get the information about the .docx files. Then you can filter the files by their extension and call .getinfo() on your .docx files only. Go ahead and give it a try!
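One possible way to approach this exercise is sketched below. The archive, its member names, and their contents are all hypothetical placeholders, not real office documents:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "documents.zip"
    # Assumption: placeholder members that only mimic different file types.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        for name in ("report.docx", "budget.xlsx", "notes.txt", "letter.docx"):
            archive.writestr(name, f"placeholder for {name}")

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        # Filter the names first, then ask for metadata on the matches only.
        docx_sizes = {
            name: archive.getinfo(name).file_size
            for name in archive.namelist()
            if name.endswith(".docx")
        }

print(docx_sizes)
```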

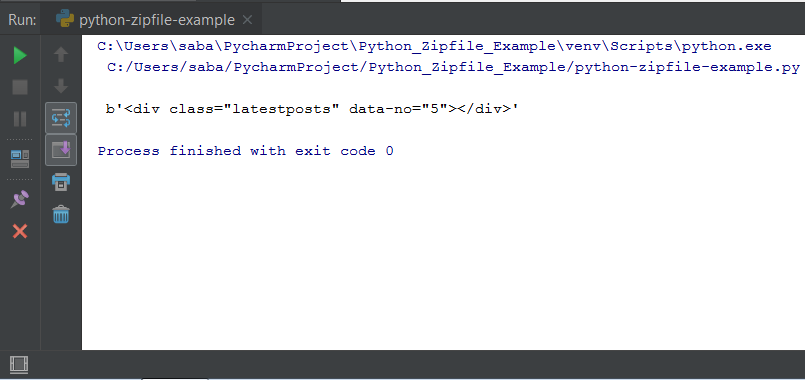

Sometimes you have a ZIP file and need to read the content of a given member file without extracting it. To do that, you can use .read() . This method takes a member file’s name and returns that file’s content as bytes :

To use .read(), you need to open the ZIP file for reading or appending. Note that .read() returns the content of the target file as a stream of bytes. In this example, you use .split() to split the stream into lines, using the line feed character "\n" as a separator. Because .split() is operating on a bytes object, you need to add a leading b to the string used as an argument.
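The following sketch shows .read() and the bytes-based .split() call on a stand-in archive. The member content is a placeholder:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive with a two-line text member.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\nWelcome!\n")

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        raw_content = archive.read("hello.txt")  # Always bytes, never str
        for line in raw_content.split(b"\n"):
            print(line)

lines = raw_content.split(b"\n")
```

Note the trailing empty bytes object in the result, which appears because the content ends with a line feed.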

ZipFile.read() also accepts a second positional argument called pwd . This argument allows you to provide a password for reading encrypted files. To try this feature, you can rely on the sample_pwd.zip file that you downloaded with the material for this tutorial:

In the first example, you provide the password secret to read your encrypted file. The pwd argument accepts values of the bytes type. If you use .read() on an encrypted file without providing the required password, then you get a RuntimeError , as you can note in the second example.

Note: Python’s zipfile supports decryption. However, it doesn’t support the creation of encrypted ZIP files. That’s why you would need to use an external file archiver to encrypt your files.

Some popular file archivers include 7z and WinRAR for Windows, Ark and GNOME Archive Manager for Linux, and Archiver for macOS.

For large encrypted ZIP files, keep in mind that the decryption operation can be extremely slow because it’s implemented in pure Python. In such cases, consider using a specialized program to handle your archives instead of using zipfile .

If you regularly work with encrypted files, then you may want to avoid providing the decryption password every time you call .read() or another method that accepts a pwd argument. If that’s the case, you can use ZipFile.setpassword() to set a global password:

With .setpassword() , you just need to provide your password once. ZipFile uses that unique password for decrypting all the member files.

In contrast, if you have ZIP files with different passwords for individual member files, then you need to provide the specific password for each file using the pwd argument of .read() :

In this example, you use secret1 as a password to read hello.txt and secret2 to read lorem.md . A final detail to consider is that when you use the pwd argument, you’re overriding whatever archive-level password you may have set with .setpassword() .

Note: Calling .read() on a ZIP file that uses an unsupported compression method raises a NotImplementedError . You also get an error if the required compression module isn’t available in your Python installation.

If you’re looking for a more flexible way to read from member files and create and add new member files to an archive, then ZipFile.open() is for you. Like the built-in open() function, this method returns a file-like object that implements the context manager protocol, and therefore it supports the with statement:

In this example, you open hello.txt for reading. The first argument to .open() is name , indicating the member file that you want to open. The second argument is the mode, which defaults to "r" as usual. ZipFile.open() also accepts a pwd argument for opening encrypted files. This argument works the same as the equivalent pwd argument in .read() .
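As a sketch of this usage, the code below opens a member of a stand-in archive with .open() and iterates over it line by line. The archive content is a placeholder:

```python
import tempfile
import zipfile
from pathlib import Path

hello_lines = []

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive with a two-line text member.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\nWelcome to Real Python!\n")

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        # The returned object is a binary file-like object, so lines are bytes.
        with archive.open("hello.txt", mode="r") as hello:
            for line in hello:
                hello_lines.append(line)
                print(line)
```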

You can also use .open() with the "w" mode. This mode allows you to create a new member file, write content to it, and finally append the file to the underlying archive, which you should open in append mode:

In the first code snippet, you open sample.zip in append mode ( "a" ). Then you create new_hello.txt by calling .open() with the "w" mode. This function returns a file-like object that supports .write() , which allows you to write bytes into the newly created file.

Note: You need to supply a non-existing filename to .open(). If you use a filename that already exists in the underlying archive, then you’ll end up with a duplicated file and a UserWarning.

In this example, you write b'Hello, World!' into new_hello.txt . When the execution flow exits the inner with statement, Python writes the input bytes to the member file. When the outer with statement exits, Python writes new_hello.txt to the underlying ZIP file, sample.zip .

The second code snippet confirms that new_hello.txt is now a member file of sample.zip. A detail to notice in the output of this example is that .open() in "w" mode sets the Modified date of the newly added file to 1980-01-01 00:00:00, which is a weird behavior that you should keep in mind when using this method.
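The two snippets described above might be sketched as follows, using a stand-in for sample.zip built in a temporary directory:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive that already holds one member.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")

    # First snippet: open the archive in append mode, then create a new
    # member by opening it in "w" mode and writing bytes into it.
    with zipfile.ZipFile(archive_path, mode="a") as archive:
        with archive.open("new_hello.txt", "w") as new_hello:
            new_hello.write(b"Hello, World!")

    # Second snippet: confirm that the new member is part of the archive.
    with zipfile.ZipFile(archive_path, mode="r") as archive:
        archive.printdir()
        names_after_open_w = archive.namelist()
        new_content = archive.read("new_hello.txt")
```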

As you learned in the above section, you can use the .read() and .write() methods to read from and write to member files without extracting them from the containing ZIP archive. Both of these methods work exclusively with bytes.

However, when you have a ZIP archive containing text files, you may want to read their content as text instead of as bytes. There are at least two ways to do this. You can use:

- bytes.decode()

- io.TextIOWrapper

Because ZipFile.read() returns the content of the target member file as bytes, .decode() can operate on these bytes directly. The .decode() method decodes a bytes object into a string using a given character encoding format.

Here’s how you can use .decode() to read text from the hello.txt file in your sample.zip archive:

In this example, you read the content of hello.txt as bytes. Then you call .decode() to decode the bytes into a string using UTF-8 as encoding. To set the encoding argument, you use the "utf-8" string. However, you can use any other valid encoding, such as UTF-16 or cp1252, which can be represented as case-insensitive strings. Note that "utf-8" is the default value of the encoding argument to .decode().

It’s important to keep in mind that you need to know beforehand the character encoding format of any member file that you want to process using .decode() . If you use the wrong character encoding, then your code will fail to correctly decode the underlying bytes into text, and you can end up with a ton of indecipherable characters.
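Here’s a short sketch of the .decode() approach. The member content is a placeholder that includes a few non-ASCII characters, so the encoding actually matters:

```python
import tempfile
import zipfile
from pathlib import Path

original_text = "Hello, Pythonista! ¡Hola! Olá!\n"

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in member stored as UTF-8 encoded bytes.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", original_text.encode("utf-8"))

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        raw_bytes = archive.read("hello.txt")

# Decode with the same encoding that was used to write the member.
decoded_text = raw_bytes.decode(encoding="utf-8")
print(decoded_text)
```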

The second option for reading text out of a member file is to use an io.TextIOWrapper object, which provides a buffered text stream. This time you need to use .open() instead of .read() . Here’s an example of using io.TextIOWrapper to read the content of the hello.txt member file as a stream of text:

In the inner with statement in this example, you open the hello.txt member file from your sample.zip archive. Then you pass the resulting binary file-like object, hello , as an argument to io.TextIOWrapper . This creates a buffered text stream by decoding the content of hello using the UTF-8 character encoding format. As a result, you get a stream of text directly from your target member file.

Just like .decode(), the io.TextIOWrapper class takes an encoding argument. You should always specify a value for this argument because the default text encoding depends on the system running the code and may not be the right value for the file that you’re trying to decode.
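The io.TextIOWrapper approach can be sketched like this, again with a stand-in archive and placeholder content:

```python
import io
import tempfile
import zipfile
from pathlib import Path

text_lines = []

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in member stored as UTF-8 encoded text.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n¡Hola!\n")

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        with archive.open("hello.txt", mode="r") as hello:
            # Wrap the binary stream so that iteration yields str lines.
            for line in io.TextIOWrapper(hello, encoding="utf-8"):
                text_lines.append(line.strip())
                print(line.strip())
```

Compared with .decode(), this variant reads the member as a stream, so you don’t have to load the whole content into memory before processing it.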

Extracting the content of a given archive is one of the most common operations that you’ll do on ZIP files. Depending on your needs, you may want to extract a single file at a time or all the files in one go.

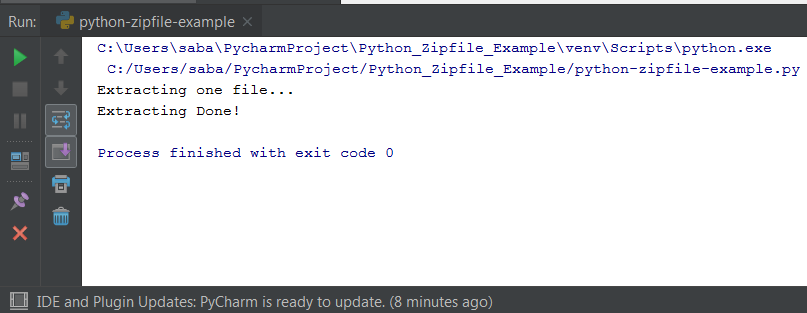

ZipFile.extract() allows you to accomplish the first task. This method takes the name of a member file and extracts it to a given directory signaled by path . The destination path defaults to the current directory:

Now new_hello.txt will be in your output_dir/ directory. If the target filename already exists in the output directory, then .extract() overwrites it without asking for confirmation. If the output directory doesn’t exist, then .extract() creates it for you. Note that .extract() returns the path to the extracted file.

The name of the member file must be the file’s full name as returned by .namelist() . It can also be a ZipInfo object containing the file’s information.
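A minimal sketch of .extract() follows. The archive is a stand-in, and output_dir/ is created inside a temporary directory instead of your working directory:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive with placeholder members.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        archive.writestr("hello.txt", "Hello, Pythonista!\n")
        archive.writestr("new_hello.txt", "Hello, World!")

    output_dir = Path(tmp) / "output_dir"  # Doesn't exist yet

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        # .extract() creates output_dir/ and returns the extracted file's path.
        extracted_path = archive.extract("new_hello.txt", path=output_dir)

    extracted_name = Path(extracted_path).name
    extracted_text = Path(extracted_path).read_text(encoding="utf-8")
    extracted_files = sorted(path.name for path in output_dir.iterdir())
```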

You can also use .extract() with encrypted files. In that case, you need to provide the required pwd argument or set the archive-level password with .setpassword() .

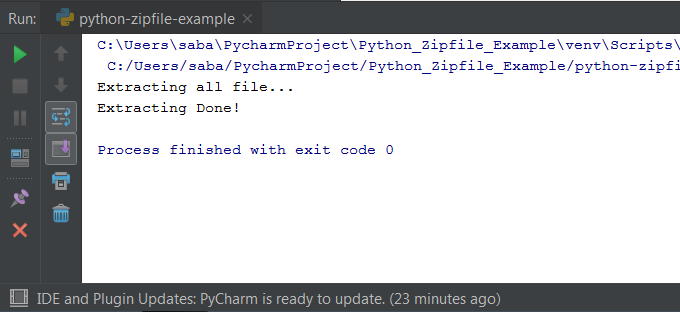

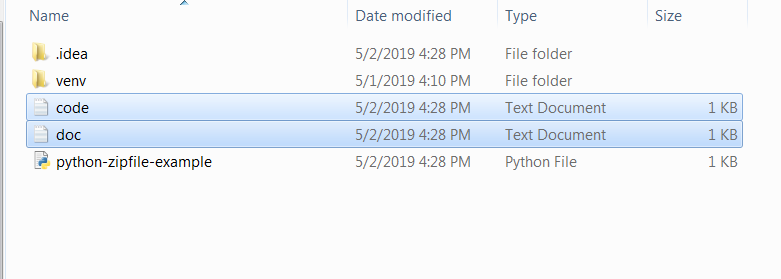

When it comes to extracting all the member files from an archive, you can use .extractall() . As its name implies, this method extracts all the member files to a destination path, which is the current directory by default:

After running this code, all the current content of sample.zip will be in your output_dir/ directory. If you pass a non-existing directory to .extractall() , then this method automatically creates the directory. Finally, if any of the member files already exist in the destination directory, then .extractall() will overwrite them without asking for your confirmation, so be careful.

If you only need to extract some of the member files from a given archive, then you can use the members argument. This argument accepts a list of member files, which should be a subset of the whole list of files in the archive at hand. Finally, just like .extract() , the .extractall() method also accepts a pwd argument to extract encrypted files.
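The sketch below shows both uses of .extractall(): extracting everything, and extracting only a subset through the members argument. The archive and the directory names all_files/ and some_files/ are placeholders:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "sample.zip"
    # Assumption: a stand-in archive with three placeholder members.
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        for name in ("hello.txt", "lorem.md", "realpython.md"):
            archive.writestr(name, f"Content of {name}\n")

    all_dir = Path(tmp) / "all_files"
    some_dir = Path(tmp) / "some_files"

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        # Extract every member. The target directory is created if needed.
        archive.extractall(path=all_dir)
        # Extract only the listed members.
        archive.extractall(path=some_dir, members=["hello.txt", "lorem.md"])

    all_extracted = sorted(path.name for path in all_dir.iterdir())
    some_extracted = sorted(path.name for path in some_dir.iterdir())
```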

Sometimes, it’s convenient for you to open a given ZIP file without using a with statement. In those cases, you need to manually close the archive after use to complete any writing operations and to free the acquired resources.

To do that, you can call .close() on your ZipFile object:

The call to .close() closes archive for you. You must call .close() before exiting your program. Otherwise, some writing operations might not be executed. For example, if you open a ZIP file for appending ( "a" ) new member files, then you need to close the archive to write the files.
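Here’s a sketch of managing the archive manually. The try … finally block is an addition for safety that the text above doesn’t require. It makes sure .close() runs even if writing fails:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive_path = Path(tmp) / "hello.zip"

    # Open the archive without a with statement.
    archive = zipfile.ZipFile(archive_path, mode="a")
    try:
        archive.writestr("new_hello.txt", "Hello, World!")
    finally:
        # Closing completes the pending writes and frees the file handle.
        archive.close()

    with zipfile.ZipFile(archive_path, mode="r") as reopened:
        names_after_close = reopened.namelist()
```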

Creating, Populating, and Extracting Your Own ZIP Files

So far, you’ve learned how to work with existing ZIP files. You’ve learned to read, write, and append member files to them by using the different modes of ZipFile . You’ve also learned how to read relevant metadata and how to extract the content of a given ZIP file.

In this section, you’ll code a few practical examples that’ll help you learn how to create ZIP files from several input files and from an entire directory using zipfile and other Python tools. You’ll also learn how to use zipfile for file compression and more.

Sometimes you need to create a ZIP archive from several related files. This way, you can have all the files in a single container for distributing them over a computer network or sharing them with friends or colleagues. To this end, you can create a list of target files and write them into an archive using ZipFile and a loop:

Here, you create a ZipFile object with the desired archive name as its first argument. The "w" mode allows you to write member files into the final ZIP file.

The for loop iterates over your list of input files and writes them into the underlying ZIP file using .write() . Once the execution flow exits the with statement, ZipFile automatically closes the archive, saving the changes for you. Now you have a multiple_files.zip archive containing all the files from your original list of files.
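The loop described above might look like the following sketch. The input files are placeholders created on the fly, and arcname is passed so that the temporary directory’s path doesn’t end up inside the archive:

```python
import tempfile
import zipfile
from pathlib import Path

filenames = ["hello.txt", "lorem.md", "realpython.md"]

with tempfile.TemporaryDirectory() as tmp:
    work_dir = Path(tmp)
    # Assumption: placeholder input files standing in for your real files.
    for filename in filenames:
        (work_dir / filename).write_text(f"Content of {filename}\n", encoding="utf-8")

    archive_path = work_dir / "multiple_files.zip"
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        for filename in filenames:
            archive.write(work_dir / filename, arcname=filename)

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        archive.printdir()
        multiple_names = archive.namelist()
```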

Bundling the content of a directory into a single archive is another everyday use case for ZIP files. Python has several tools that you can use with zipfile to approach this task. For example, you can use pathlib to read the content of a given directory . With that information, you can create a container archive using ZipFile .

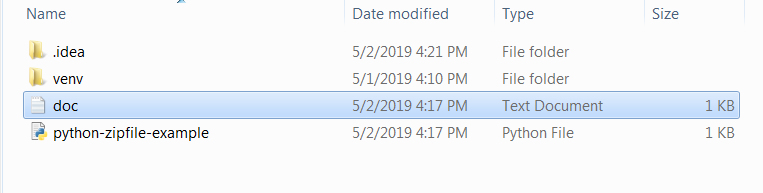

In the python-zipfile/ directory, you have a subdirectory called source_dir/ , with the following content:

In source_dir/ , you only have three regular files. Because the directory doesn’t contain subdirectories, you can use pathlib.Path.iterdir() to iterate over its content directly. With this idea in mind, here’s how you can build a ZIP file from the content of source_dir/ :

In this example, you create a pathlib.Path object from your source directory. The first with statement creates a ZipFile object ready for writing. Then the call to .iterdir() returns an iterator over the entries in the underlying directory.

Because you don’t have any subdirectories in source_dir/ , the .iterdir() function yields only files. The for loop iterates over the files and writes them into the archive.

In this case, you pass file_path.name to the second argument of .write() . This argument is called arcname and holds the name of the member file inside the resulting archive. All the examples that you’ve seen so far rely on the default value of arcname , which is the same filename you pass as the first argument to .write() .

If you don’t pass file_path.name to arcname , then your source directory will be at the root of your ZIP file, which can also be a valid result depending on your needs.
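A sketch of the .iterdir() approach follows. The source_dir/ directory and its three files are recreated as placeholders inside a temporary directory:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    directory = Path(tmp) / "source_dir"
    directory.mkdir()
    # Assumption: three placeholder files and no subdirectories.
    for name in ("hello.txt", "lorem.md", "realpython.md"):
        (directory / name).write_text(f"Content of {name}\n", encoding="utf-8")

    archive_path = Path(tmp) / "directory.zip"
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        for file_path in directory.iterdir():
            # arcname keeps only the filename, dropping the source_dir/ prefix.
            archive.write(file_path, arcname=file_path.name)

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        archive.printdir()
        flat_names = sorted(archive.namelist())
```

The names are sorted before inspection because .iterdir() yields entries in arbitrary order.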

Now check out the root_dir/ folder in your working directory. In this case, you’ll find the following structure:

You have the usual files and a subdirectory with a single file in it. If you want to create a ZIP file with this same internal structure, then you need a tool that recursively iterates through the directory tree under root_dir/ .

Here’s how to zip a complete directory tree, like the one above, using zipfile along with Path.rglob() from the pathlib module:

In this example, you use Path.rglob() to recursively traverse the directory tree under root_dir/ . Then you write every file and subdirectory to the target ZIP archive.

This time, you use Path.relative_to() to get the relative path to each file and then pass the result to the second argument of .write() . This way, the resulting ZIP file ends up with the same internal structure as your original source directory. Again, you can get rid of this argument if you want your source directory to be at the root of your ZIP file.
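The recursive variant can be sketched as follows. The root_dir/ tree here is a small placeholder with one file at the top level and one file in a subdirectory:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    directory = Path(tmp) / "root_dir"
    (directory / "sub_dir").mkdir(parents=True)
    # Assumption: a placeholder tree mimicking root_dir/ from the tutorial.
    (directory / "hello.txt").write_text("Hello, Pythonista!\n", encoding="utf-8")
    (directory / "sub_dir" / "new_hello.txt").write_text("Hello!\n", encoding="utf-8")

    archive_path = Path(tmp) / "directory_tree.zip"
    with zipfile.ZipFile(archive_path, mode="w") as archive:
        # rglob("*") yields every file and subdirectory under root_dir/.
        for file_path in directory.rglob("*"):
            archive.write(file_path, arcname=file_path.relative_to(directory))

    with zipfile.ZipFile(archive_path, mode="r") as archive:
        archive.printdir()
        tree_names = sorted(archive.namelist())
```

The subdirectory itself shows up as a member whose name ends with a slash.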

If your files are taking up too much disk space, then you might consider compressing them. Python’s zipfile supports a few popular compression methods. However, the module doesn’t compress your files by default. If you want to make your files smaller, then you need to explicitly supply a compression method to ZipFile .

Typically, you’ll use the term stored to refer to member files written into a ZIP file without compression. That’s why the default compression method of ZipFile is called ZIP_STORED , which actually refers to uncompressed member files that are simply stored in the containing archive.

The compression method is the third argument to the initializer of ZipFile . If you want to compress your files while you write them into a ZIP archive, then you can set this argument to one of the following constants:

| Constant | Compression Method | Required Module |
|---|---|---|
| zipfile.ZIP_DEFLATED | Deflate | zlib |
| zipfile.ZIP_BZIP2 | Bzip2 | bz2 |
| zipfile.ZIP_LZMA | LZMA | lzma |

These are the compression methods that you can currently use with ZipFile . A different method will raise a NotImplementedError . There are no additional compression methods available to zipfile as of Python 3.10.

As an additional requirement, if you choose one of these methods, then the compression module that supports it must be available in your Python installation. Otherwise, you’ll get a RuntimeError exception, and your code will break.

Another relevant argument of ZipFile when it comes to compressing your files is compresslevel . This argument controls which compression level you use.

With the Deflate method, compresslevel can take integer numbers from 0 through 9 . With the Bzip2 method, you can pass integers from 1 through 9 . In both cases, when the compression level increases, you get higher compression and lower compression speed.

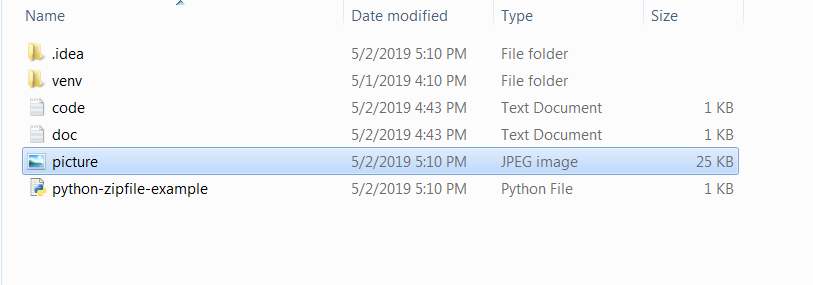

Note: Binary files, such as PNG, JPG, MP3, and the like, already use some kind of compression. As a result, adding them to a ZIP file may not make the data any smaller, because it’s already compressed to some level.

Now say you want to archive and compress the content of a given directory using the Deflate method, which is the most commonly used method in ZIP files. To do that, you can run the following code:

In this example, you pass 9 to compresslevel to get maximum compression. To provide this argument, you use a keyword argument . You need to do this because compresslevel isn’t the fourth positional argument to the ZipFile initializer.

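Note: The initializer of ZipFile takes a fourth argument called allowZip64 . It’s a Boolean argument that tells ZipFile whether it may use the ZIP64 extensions of the ZIP format, which are required for archives larger than 4 GB. ZIP64 is a variant of the format rather than a separate file extension, so the resulting file still ends in .zip . The argument defaults to True .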

After running this code, you’ll have a comp_dir.zip file in your current directory. If you compare the size of that file with the size of your original sample.zip file, then you’ll note a significant size reduction.
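The listing for this example isn't reproduced here. As a minimal sketch of what such code could look like, the snippet below recreates a small source_dir/ in a temporary folder (the file names and content are placeholders, not the tutorial's sample files) and archives it with Deflate at level 9:

```python
import pathlib
import tempfile
from zipfile import ZIP_DEFLATED, ZipFile

with tempfile.TemporaryDirectory() as tmp:
    # Placeholder source directory; names and content are illustrative only.
    source = pathlib.Path(tmp) / "source_dir"
    (source / "sub_dir").mkdir(parents=True)
    (source / "hello.txt").write_text("Hello, World!\n" * 1000)
    (source / "sub_dir" / "notes.md").write_text("# Notes\n" * 1000)

    archive_path = pathlib.Path(tmp) / "comp_dir.zip"
    # compression is the third positional argument; compresslevel is passed by keyword.
    with ZipFile(archive_path, "w", ZIP_DEFLATED, compresslevel=9) as archive:
        for file_path in sorted(source.rglob("*")):
            if file_path.is_file():
                archive.write(file_path, arcname=file_path.relative_to(source))

    # Read the archive back to compare stored and compressed sizes.
    with ZipFile(archive_path) as archive:
        members = archive.namelist()
        stored_size = sum(info.file_size for info in archive.infolist())
        compressed_size = sum(info.compress_size for info in archive.infolist())

print(members)
print(f"{stored_size} bytes before compression, {compressed_size} bytes after")
```

Because the placeholder files are highly repetitive, they compress very well. Real-world data will usually show a smaller reduction.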

Creating ZIP files sequentially can be another common requirement in your day-to-day programming. For example, you may need to create an initial ZIP file with or without content and then append new member files as soon as they become available. In this situation, you need to open and close the target ZIP file multiple times.

To solve this problem, you can use ZipFile in append mode ( "a" ), as you have already done. This mode allows you to safely append new member files to a ZIP archive without truncating its current content:

In this example, append_member() is a function that appends a file ( member ) to the input ZIP archive ( zip_file ). To perform this action, the function opens and closes the target archive every time you call it. Using a function to perform this task allows you to reuse the code as many times as you need.

The get_file_from_stream() function is a generator function simulating a stream of files to process. Meanwhile, the for loop sequentially adds member files to incremental.zip using append_member() . If you check your working directory after running this code, then you’ll find an incremental.zip archive containing the three files that you passed into the loop.
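The functions described above could be sketched as follows. The exact signatures are assumptions: here get_file_from_stream() takes a directory argument and writes three placeholder files into it so that the snippet runs on its own.

```python
import pathlib
import tempfile
from zipfile import ZipFile

def append_member(zip_file, member):
    """Append one file to the archive, opening and closing it on every call."""
    with ZipFile(zip_file, mode="a") as archive:
        archive.write(member, arcname=pathlib.Path(member).name)

def get_file_from_stream(directory):
    """Simulate a stream of files that become available one at a time."""
    for name in ["hello.txt", "lorem.md", "realpython.md"]:
        path = pathlib.Path(directory) / name
        path.write_text(f"Content of {name}\n")  # placeholder content
        yield path

with tempfile.TemporaryDirectory() as tmp:
    target = pathlib.Path(tmp) / "incremental.zip"
    for file_path in get_file_from_stream(tmp):
        append_member(target, file_path)

    with ZipFile(target) as archive:
        incremental_members = archive.namelist()

print(incremental_members)
```

Note that append mode creates the archive on the first call if it doesn't exist yet, so no separate initialization step is needed.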

One of the most common operations you’ll ever perform on ZIP files is to extract their content to a given directory in your file system. You already learned the basics of using .extract() and .extractall() to extract one or all the files from an archive.

As an additional example, get back to your sample.zip file. At this point, the archive contains four files of different types. You have two .txt files and two .md files. Now say you want to extract only the .md files. To do so, you can run the following code:

The with statement opens sample.zip for reading. The loop iterates over each file in the archive using namelist() , while the conditional statement checks if the filename ends with the .md extension. If it does, then you extract the file at hand to a target directory, output_dir/ , using .extract() .
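A minimal sketch of this filtering logic is shown below. It builds a stand-in for sample.zip in a temporary folder (the member names and content are assumptions) so that it doesn't depend on files from earlier sections:

```python
import pathlib
import tempfile
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    tmp_path = pathlib.Path(tmp)
    archive_path = tmp_path / "sample.zip"
    # Stand-in archive with two .txt and two .md members.
    with ZipFile(archive_path, "w") as archive:
        for name in ["hello.txt", "lorem.md", "realpython.md", "new_hello.txt"]:
            archive.writestr(name, f"Content of {name}\n")

    output_dir = tmp_path / "output_dir"
    with ZipFile(archive_path) as archive:
        for name in archive.namelist():
            if name.endswith(".md"):
                # extract() creates output_dir if it doesn't exist yet.
                archive.extract(name, path=output_dir)

    extracted = sorted(p.name for p in output_dir.iterdir())

print(extracted)
```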

Exploring Additional Classes From zipfile

So far, you’ve learned about ZipFile and ZipInfo , which are two of the classes available in zipfile . This module also provides two more classes that can be handy in some situations. Those classes are zipfile.Path and zipfile.PyZipFile . In the following two sections, you’ll learn the basics of these classes and their main features.

When you open a ZIP file with your favorite archiver application, you see the archive’s internal structure. You may have files at the root of the archive. You may also have subdirectories with more files. The archive looks like a normal directory on your file system, with each file located at a specific path.

The zipfile.Path class allows you to construct path objects to quickly create and manage paths to member files and directories inside a given ZIP file. The class takes two arguments:

- root accepts a ZIP file, either as a ZipFile object or a string-based path to a physical ZIP file.

- at holds the location of a specific member file or directory inside the archive. It defaults to the empty string, representing the root of the archive.

With your old friend sample.zip as the target, run the following code:

This code shows that zipfile.Path implements several features that are common to a pathlib.Path object. You can get the name of the file with .name . You can check if the path points to a regular file with .is_file() . You can check if a given file exists inside a particular ZIP file, and more.

Path also provides an .open() method to open a member file using different modes. For example, the code below opens hello.txt for reading:

With Path , you can quickly create a path object pointing to a specific member file in a given ZIP file and access its content immediately using .open() .

Just like with a pathlib.Path object, you can list the content of a ZIP file by calling .iterdir() on a zipfile.Path object:

It’s clear that zipfile.Path provides many useful features that you can use to manage member files in your ZIP archives in almost no time.

Another useful class in zipfile is PyZipFile . This class is pretty similar to ZipFile , and it’s especially handy when you need to bundle Python modules and packages into ZIP files. The main difference from ZipFile is that the initializer of PyZipFile takes an optional argument called optimize , which allows you to optimize the Python code by compiling it to bytecode before archiving it.

PyZipFile provides the same interface as ZipFile , with the addition of .writepy() . This method can take a Python file ( .py ) as an argument and add it to the underlying ZIP file. If optimize is -1 (the default), then the .py file is automatically compiled to a .pyc file and then added to the target archive. Why does this happen?

Since version 2.3, the Python interpreter has supported importing Python code from ZIP files , a capability known as Zip imports . This feature is quite convenient. It allows you to create importable ZIP files to distribute your modules and packages as a single archive.

Note: You can also use the ZIP file format to create and distribute Python executable applications, which are commonly known as Python Zip applications. To learn how to create them, check out Python’s zipapp: Build Executable Zip Applications .

PyZipFile is helpful when you need to generate importable ZIP files. Packaging the .pyc file rather than the .py file makes the importing process way more efficient because it skips the compilation step.

Inside the python-zipfile/ directory, you have a hello.py module with the following content:

This code defines a function called greet() , which takes name as an argument and prints a greeting message to the screen. Now say you want to package this module into a ZIP file for distribution purposes. To do that, you can run the following code:

In this example, the call to .writepy() automatically compiles hello.py to hello.pyc and stores it in hello.zip . This becomes clear when you list the archive’s content using .printdir() .

Once you have hello.py bundled into a ZIP file, then you can use Python’s import system to import this module from its containing archive:

The first step to import code from a ZIP file is to make that file available in sys.path . This variable holds a list of strings that specifies Python’s search path for modules. To add a new item to sys.path , you can use .insert() .

For this example to work, you need to change the placeholder path and pass the path to hello.zip on your file system. Once your importable ZIP file is in this list, then you can import your code just like you’d do with a regular module.
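Put together, the bundling and importing steps might look like the sketch below. The body of greet() is an assumption (the original hello.py isn't reproduced here), and a temporary folder stands in for python-zipfile/ so that no placeholder path needs editing:

```python
import importlib
import pathlib
import sys
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    tmp_path = pathlib.Path(tmp)
    # Hypothetical module content; the original hello.py may differ.
    module_path = tmp_path / "hello.py"
    module_path.write_text(
        "def greet(name):\n"
        '    print(f"Hello, {name}! Welcome to Real Python!")\n'
    )

    zip_path = tmp_path / "hello.zip"
    with zipfile.PyZipFile(zip_path, mode="w") as zip_module:
        # Compiles hello.py and stores the bytecode as hello.pyc.
        zip_module.writepy(str(module_path))

    with zipfile.ZipFile(zip_path) as archive:
        bundled = archive.namelist()

    # Make the archive importable, import the module, then restore sys.path.
    sys.path.insert(0, str(zip_path))
    hello = importlib.import_module("hello")
    hello.greet("Pythonista")
    sys.path.remove(str(zip_path))

print(bundled)
```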

Finally, consider the hello/ subdirectory in your working folder. It contains a small Python package with the following structure:

The __init__.py module turns the hello/ directory into a Python package. The hello.py module is the same one that you used in the example above. Now suppose you want to bundle this package into a ZIP file. If that’s the case, then you can do the following:

The call to .writepy() takes the hello package as an argument, searches for .py files inside it, compiles them to .pyc files, and finally adds them to the target ZIP file, hello.zip . Again, you can import your code from this archive by following the steps that you learned before:

Because your code is in a package now, you first need to import the hello module from the hello package. Then you can access your greet() function normally.

Python’s zipfile also offers a minimal command-line interface that allows you to access the module’s main functionality quickly. For example, you can use the -l or --list option to list the content of an existing ZIP file:

This command shows the same output as an equivalent call to .printdir() on the sample.zip archive.

Now say you want to create a new ZIP file containing several input files. In that case, you can use the -c or --create option:

This command creates a new_sample.zip file containing your hello.txt , lorem.md , and realpython.md files.

What if you need to create a ZIP file to archive an entire directory? For example, you may have your own source_dir/ with the same three files as the example above. You can create a ZIP file from that directory by using the following command:

With this command, zipfile places source_dir/ at the root of the resulting source_dir.zip file. As usual, you can list the archive content by running zipfile with the -l option.

Note: When you use zipfile to create an archive from your command line, the library implicitly uses the Deflate compression algorithm when archiving your files.

You can also extract all the content of a given ZIP file using the -e or --extract option from your command line:

After running this command, you’ll have a new sample/ folder in your working directory. The new folder will contain the current files in your sample.zip archive.

The final option that you can use with zipfile from the command line is -t or --test . This option allows you to test if a given file is a valid ZIP file. Go ahead and give it a try!
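The commands themselves aren't reproduced here. In a terminal, they take the form python -m zipfile followed by one of the options above. To keep the examples in one language, the sketch below drives the same command-line interface from Python through subprocess. The run_zipfile_cli() helper and the file names are assumptions made for illustration:

```python
import pathlib
import subprocess
import sys
import tempfile

def run_zipfile_cli(*args, cwd):
    """Run `python -m zipfile` with the given options and return the result."""
    return subprocess.run(
        [sys.executable, "-m", "zipfile", *args],
        cwd=cwd, capture_output=True, text=True, check=True,
    )

with tempfile.TemporaryDirectory() as tmp:
    for name in ["hello.txt", "lorem.md", "realpython.md"]:
        (pathlib.Path(tmp) / name).write_text(f"Content of {name}\n")

    # -c: create an archive from the listed input files.
    run_zipfile_cli("-c", "new_sample.zip", "hello.txt", "lorem.md", "realpython.md", cwd=tmp)
    # -l: list the content of the archive.
    listing = run_zipfile_cli("-l", "new_sample.zip", cwd=tmp).stdout
    # -t: test whether the file is a valid ZIP file.
    test_result = run_zipfile_cli("-t", "new_sample.zip", cwd=tmp)
    # -e: extract everything into the given output directory.
    run_zipfile_cli("-e", "new_sample.zip", "extracted/", cwd=tmp)
    extracted_names = sorted(p.name for p in (pathlib.Path(tmp) / "extracted").iterdir())

print(listing)
```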

There are a few other tools in the Python standard library that you can use to archive, compress, and decompress your files at a lower level. Python’s zipfile uses some of these internally, mainly for compression purposes. Here’s a summary of some of these tools:

| Module | Description |
|---|---|
| zlib | Allows compression and decompression using the zlib library |
| bz2 | Provides an interface for compressing and decompressing data using the Bzip2 compression algorithm |
| lzma | Provides classes and functions for compressing and decompressing data using the LZMA compression algorithm |

Unlike zipfile , some of these modules allow you to compress and decompress data from memory and data streams other than regular files and archives.

In the Python standard library, you’ll also find tarfile , which supports the TAR archiving format. There’s also a module called gzip , which provides an interface to compress and decompress data, similar to how the GNU Gzip program does it.

For example, you can use gzip to create a compressed file containing some text:

Once you run this code, you’ll have a hello.txt.gz archive containing a compressed version of hello.txt in your current directory. Inside hello.txt , you’ll find the text Hello, World! .
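As a minimal sketch of this gzip example, the snippet below writes the text in text mode and reads it back to confirm the round trip. It uses a temporary folder rather than the current directory, which is an assumption made so that the snippet leaves no files behind:

```python
import gzip
import pathlib
import tempfile

with tempfile.TemporaryDirectory() as tmp:
    gz_path = pathlib.Path(tmp) / "hello.txt.gz"

    # "wt" opens the compressed file for writing text.
    with gzip.open(gz_path, mode="wt", encoding="utf-8") as gz_file:
        gz_file.write("Hello, World!")

    # "rt" decompresses and decodes the content again.
    with gzip.open(gz_path, mode="rt", encoding="utf-8") as gz_file:
        round_trip = gz_file.read()

print(round_trip)
```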

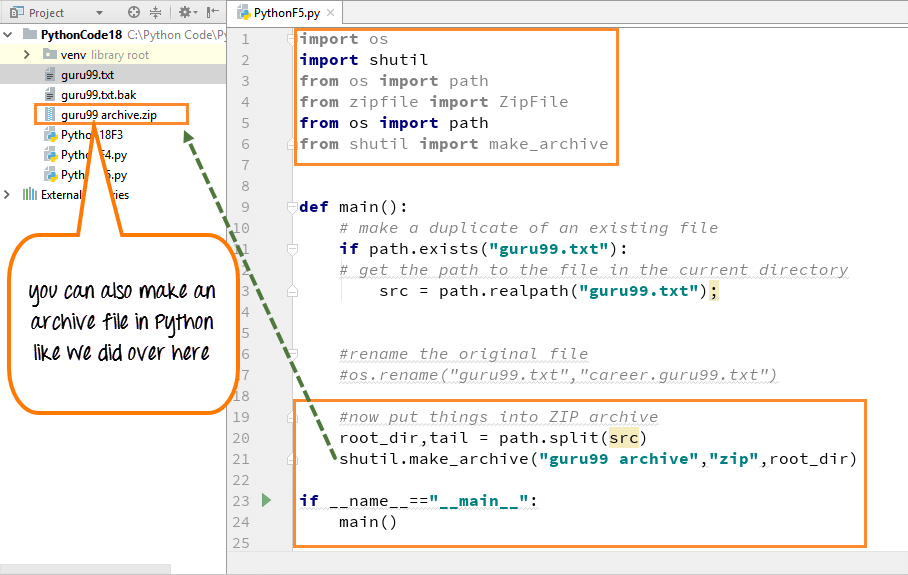

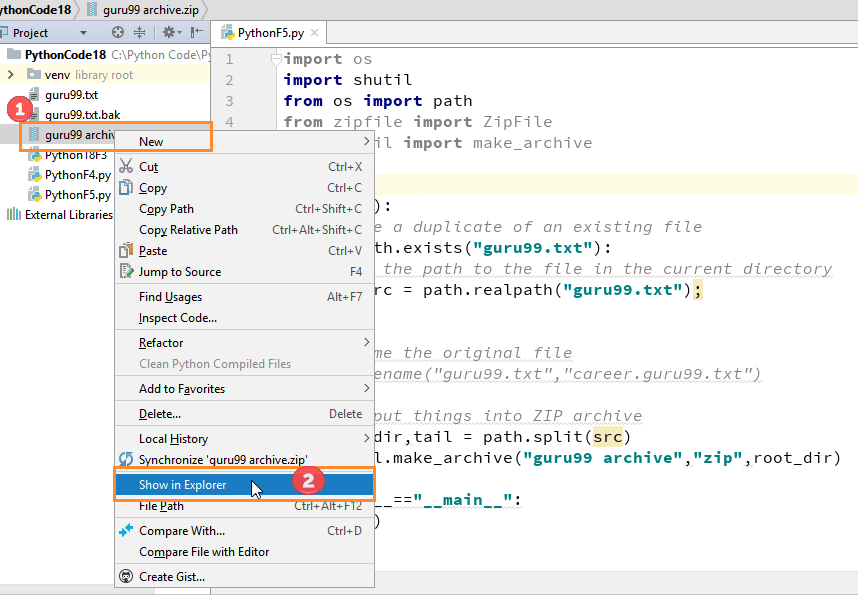

A quick and high-level way to create a ZIP file without using zipfile is to use shutil . This module allows you to perform several high-level operations on files and collections of files. When it comes to archiving operations , you have make_archive() , which can create archives, such as ZIP or TAR files:

This code creates a compressed file called sample.zip in your working directory. This ZIP file will contain all the files in the input directory, source_dir/ . The make_archive() function is convenient when you need a quick and high-level way to create your ZIP files in Python.
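A call to make_archive() along these lines could look like the following sketch. The content of source_dir/ is a placeholder, and the archive is created in a temporary folder instead of the working directory:

```python
import pathlib
import shutil
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    source = pathlib.Path(tmp) / "source_dir"
    source.mkdir()
    (source / "hello.txt").write_text("Hello, World!\n")
    (source / "lorem.md").write_text("# Lorem\n")

    # base_name has no extension; make_archive() appends ".zip" itself.
    created = shutil.make_archive(
        str(pathlib.Path(tmp) / "sample"), format="zip", root_dir=str(source)
    )

    with zipfile.ZipFile(created) as archive:
        archived = sorted(name for name in archive.namelist() if not name.endswith("/"))

print(created)
print(archived)
```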

Python’s zipfile is a handy tool when you need to read, write, compress, decompress, and extract files from ZIP archives . The ZIP file format has become an industry standard, allowing you to package and optionally compress your digital data.

The benefits of using ZIP files include archiving related files together, saving disk space, making it easy to transfer data over computer networks, bundling Python code for distribution purposes, and more.

In this tutorial, you learned how to:

- Use Python’s zipfile to read, write, and extract existing ZIP files

- Read metadata about the content of your ZIP files with zipfile

- Create your own ZIP files to archive and compress your digital data

You also learned how to use zipfile from your command line to list, create, and extract your ZIP files. With this knowledge, you’re ready to efficiently archive, compress, and manipulate your digital data using the ZIP file format.

About Leodanis Pozo Ramos

Leodanis is an industrial engineer who loves Python and software development. He's a self-taught Python developer with 6+ years of experience. He's an avid technical writer with a growing number of articles published on Real Python and other sites.

Creating a Zip Archive of a Directory in Python

- Introduction

When dealing with large amounts of data or files, you might find yourself needing to compress files into a more manageable format. One of the best ways to do this is by creating a zip archive.

In this article, we'll be exploring how you can create a zip archive of a directory using Python. Whether you're looking to save space, simplify file sharing, or just keep things organized, Python's zipfile module provides a way to do this.

- Creating a Zip Archive with Python

Python's standard library comes with a module named zipfile that provides methods for creating, reading, writing, appending, and listing contents of a ZIP file. This module is useful for creating a zip archive of a directory. We'll start by importing the zipfile and os modules:

Now, let's create a function that will zip a directory:

In this function, we first open a new zip file in write mode. Then, we walk through the directory we want to zip. For each file in the directory, we use the write() method to add it to the zip file. The os.path.relpath() function is used so that we store the relative path of the file in the zip file, instead of the absolute path.

Let's test our function:

After running this code, you should see a new file named archive.zip in your current directory. This zip file contains all the files from test_directory .

Note: Be careful when specifying the paths. If the zip_path file already exists, it will be overwritten.
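The function and the test call described above might look like the sketch below. The directory layout is a placeholder created in a temporary folder, and the parameter names folder_path and zip_path are assumptions based on the surrounding text:

```python
import os
import tempfile
import zipfile

def zip_directory(folder_path, zip_path):
    """Write every file under folder_path into a new archive at zip_path."""
    with zipfile.ZipFile(zip_path, mode="w") as zipf:
        for root, dirs, files in os.walk(folder_path):
            for file in files:
                full_path = os.path.join(root, file)
                # Store the path relative to folder_path, not the absolute path.
                zipf.write(full_path, arcname=os.path.relpath(full_path, folder_path))

with tempfile.TemporaryDirectory() as tmp:
    # Placeholder test directory with one nested folder.
    test_directory = os.path.join(tmp, "test_directory")
    os.makedirs(os.path.join(test_directory, "nested"))
    for relative in ["a.txt", os.path.join("nested", "b.txt")]:
        with open(os.path.join(test_directory, relative), "w") as handle:
            handle.write("sample text\n")

    archive_zip = os.path.join(tmp, "archive.zip")
    zip_directory(test_directory, archive_zip)

    with zipfile.ZipFile(archive_zip) as zipf:
        zipped_names = sorted(zipf.namelist())

print(zipped_names)
```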

Python's zipfile module makes it easy to create a zip archive of a directory. With just a few lines of code, you can compress and organize your files.

In the following sections, we'll dive deeper into handling nested directories, large directories, and error handling. This may seem a bit backwards, but the above function is likely what most people came here for, so I wanted to show it first.

- Using the zipfile Module

In Python, the zipfile module is the best tool for working with zip archives. It provides functions to read, write, append, and extract data from zip files. The module is part of Python's standard library, so there's no need to install anything extra.

Here's a simple example of how you can create a new zip file and add a file to it:

In this code, we first import the zipfile module. Then, we create a new zip file named 'example.zip' in write mode ('w'). We add a file named 'test.txt' to the zip file using the write() method. Finally, we close the zip file using the close() method.
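A sketch of this simple example, using an explicit close() call as described, is shown below. The try/finally block is an addition that isn't mentioned in the text. It only ensures that close() runs even if write() fails:

```python
import os
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    # Placeholder input file.
    test_file = os.path.join(tmp, "test.txt")
    with open(test_file, "w") as handle:
        handle.write("some text\n")

    zip_file = zipfile.ZipFile(os.path.join(tmp, "example.zip"), "w")
    try:
        zip_file.write(test_file, arcname="test.txt")
        example_names = zip_file.namelist()
    finally:
        # close() writes the final records; without it the archive is incomplete.
        zip_file.close()

print(example_names)
```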

- Creating a Zip Archive of a Directory

Creating a zip archive of a directory involves a bit more work, but it's still fairly easy with the zipfile module. You need to walk through the directory structure, adding each file to the zip archive.

We first define a function zip_directory() that takes a folder path and a ZipFile object. It uses the os.walk() function to iterate over all files in the directory and its subdirectories. For each file, it constructs the full file path and adds the file to the zip archive.

The os.walk() function is a convenient way to traverse directories. It generates the file names in a directory tree by walking the tree either top-down or bottom-up.

Note: Be careful with the file paths when adding files to the zip archive. The write() method adds files to the archive with the exact path you provide. If you provide an absolute path, the file will be added with the full absolute path in the zip archive. This is usually not what you want. Instead, you typically want to add files with a relative path to the directory you're zipping.

In the main part of the script, we create a new zip file, call the zip_directory() function to add the directory to the zip file, and finally close the zip file.

- Working with Nested Directories

When working with nested directories, the process of creating a zip archive is a bit more complicated. The first function we showed in this article actually handles this case as well, which we'll show again here:

The main difference is that we're creating the ZipFile object outside of the function and passing it in as a parameter. Whether you create it inside the function or not is up to personal preference.
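The variant that receives an already open ZipFile object could be sketched like this. The nested layout (level1/level2/) is a hypothetical example used to show that os.walk() reaches files at any depth:

```python
import os
import tempfile
import zipfile

def zip_directory(folder_path, zip_file):
    """Add every file below folder_path to an already open ZipFile object."""
    for folder_name, subfolders, filenames in os.walk(folder_path):
        for filename in filenames:
            file_path = os.path.join(folder_name, filename)
            # Keep the nested structure, relative to folder_path.
            zip_file.write(file_path, arcname=os.path.relpath(file_path, folder_path))

with tempfile.TemporaryDirectory() as tmp:
    folder = os.path.join(tmp, "project")
    os.makedirs(os.path.join(folder, "level1", "level2"))
    for relative in ["top.txt", os.path.join("level1", "level2", "deep.txt")]:
        with open(os.path.join(folder, relative), "w") as handle:
            handle.write("sample text\n")

    nested_zip = os.path.join(tmp, "nested.zip")
    zip_file = zipfile.ZipFile(nested_zip, "w")
    zip_directory(folder, zip_file)
    zip_file.close()

    with zipfile.ZipFile(nested_zip) as zipf:
        nested_names = sorted(zipf.namelist())

print(nested_names)
```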

- Handling Large Directories

So what if we're dealing with a large directory? Zipping a large directory can consume a lot of memory and even crash your program if you don't take the right precautions.

Luckily, the zipfile module allows us to create a zip archive without loading all files into memory at once. By using the with statement, we can ensure that each file is closed and its memory freed after it's added to the archive.

In this version, we're using the with statement when opening each file and when creating the zip archive. This will guarantee that each file is closed after it's read, freeing up the memory it was using. This way, we can safely zip large directories without running into memory issues.
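One way to sketch this approach is to copy each file into the archive in fixed-size chunks. The zip_large_directory() name, the chunk size, and the use of force_zip64=True are assumptions, not necessarily what the original listing did:

```python
import os
import shutil
import tempfile
import zipfile

def zip_large_directory(folder_path, zip_path, chunk_size=1024 * 1024):
    """Stream each file into the archive chunk by chunk."""
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zipf:
        for root, _dirs, files in os.walk(folder_path):
            for name in files:
                file_path = os.path.join(root, name)
                arcname = os.path.relpath(file_path, folder_path)
                # force_zip64=True allows a member to grow past 2 GiB.
                with open(file_path, "rb") as src, \
                        zipf.open(arcname, "w", force_zip64=True) as dst:
                    shutil.copyfileobj(src, dst, chunk_size)

with tempfile.TemporaryDirectory() as tmp:
    big_dir = os.path.join(tmp, "big_dir")
    os.makedirs(big_dir)
    # A 3 MiB placeholder file stands in for a genuinely large one.
    with open(os.path.join(big_dir, "big.bin"), "wb") as handle:
        handle.write(b"\0" * (3 * 1024 * 1024))

    big_zip = os.path.join(tmp, "big.zip")
    zip_large_directory(big_dir, big_zip)

    with zipfile.ZipFile(big_zip) as zipf:
        big_info = zipf.getinfo("big.bin")
        big_sizes = (big_info.file_size, big_info.compress_size)

print(big_sizes)
```

Only one chunk per file is held in memory at a time. In current CPython versions, ZipFile.write() also copies file data in chunks internally, so the explicit loop mainly helps when the data comes from a source other than a file on disk. Unlike write() , this sketch doesn't carry over the original file's modification time.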

- Error Handling in zipfile

When working with zipfile in Python, we need to remember to handle exceptions so our program doesn't crash unexpectedly. The most common exceptions you might encounter are RuntimeError , ValueError , and FileNotFoundError .

Let's take a look at how we can handle these exceptions while creating a zip file:

FileNotFoundError is raised when the file we're trying to zip doesn't exist. RuntimeError is a general exception that might be raised for a number of reasons, so we print the exception message to understand what went wrong. zipfile.LargeZipFile is raised when the archive would need the ZIP64 extensions (for example, because a file is too big) but they're disabled.

Note: Python's zipfile module raises a LargeZipFile error when the file you're trying to compress is larger than 2 GB and ZIP64 support is turned off. Since Python 3.4, allowZip64 defaults to True , so you'll typically only see this error if ZipFile was called with allowZip64=False . If you're working with large files, make sure the archive is opened with allowZip64=True .
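The exception handling discussed above could be sketched as follows. The safe_zip() helper and its True/False return value are assumptions made for illustration:

```python
import os
import tempfile
import zipfile

def safe_zip(file_paths, zip_path):
    """Zip the given files and report common errors instead of propagating them."""
    try:
        with zipfile.ZipFile(zip_path, "w", allowZip64=True) as zipf:
            for file_path in file_paths:
                zipf.write(file_path, arcname=os.path.basename(file_path))
    except FileNotFoundError as error:
        print(f"File not found: {error.filename}")
        return False
    except zipfile.LargeZipFile as error:
        print(f"ZIP64 support is required: {error}")
        return False
    except (RuntimeError, ValueError) as error:
        print(f"Unexpected problem while zipping: {error}")
        return False
    return True

with tempfile.TemporaryDirectory() as tmp:
    existing = os.path.join(tmp, "report.txt")
    with open(existing, "w") as handle:
        handle.write("ok\n")

    ok_result = safe_zip([existing], os.path.join(tmp, "ok.zip"))
    missing_result = safe_zip([os.path.join(tmp, "missing.txt")], os.path.join(tmp, "bad.zip"))

print(ok_result, missing_result)
```

Note that when an error occurs partway through, the partially written archive is left on disk, so a caller may want to delete it.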

- Common Errors and Solutions

While working with the zipfile module, you might encounter several common errors. Let's explore some of these errors and their solutions:

FileNotFoundError

This error happens when the file or directory you're trying to zip does not exist. So always check if the file or directory exists before attempting to compress it.

IsADirectoryError

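This error is raised when you try to open a directory as if it were a regular file, for example with the built-in open() . Note that calling ZipFile.write() on a directory only adds an entry for the directory itself and doesn't include the files inside it. To zip a directory's contents, use os.walk() to traverse the directory and write the individual files instead.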

PermissionError

As you probably guessed, this error happens when you don't have the necessary permissions to read the file or write to the directory. Make sure you have the correct permissions before trying to manipulate files or directories.

LargeZipFile

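As mentioned earlier, this error is raised when an archive needs the ZIP64 extensions (for example, because a file is larger than 2 GB) but they're disabled. To prevent this error, make sure ZipFile is called with allowZip64=True , which is the default in Python 3.4 and later.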

In this snippet, we're using the allowZip64=True argument to allow zipping files larger than 2 GB.

- Compressing Individual Files

The zipfile module can compress not only directories but also individual files. Let's say you have a file called document.txt that you want to compress. Here's how you'd do that:

In this code, we're creating a new zip archive named compressed_file.zip and just adding document.txt to it. The 'w' parameter means that we're opening the zip file in write mode.

Now, if you check your directory, you should see a new zip file named compressed_file.zip .

- Extracting Zip Files

And finally, let's see how to reverse this zipping by extracting the files. Let's say we want to extract the document.txt file we just compressed. Here's how to do it:

In this code snippet, we're opening the zip file in read mode ('r') and then calling the extractall() method. This method extracts all the files in the zip archive to the current directory.

Note: If you want to extract the files to a specific directory, you can pass the directory path as an argument to the extractall() method like so: myzip.extractall('/path/to/directory/') .

Now, if you check your directory, you should see the document.txt file. That's all there is to it!
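The compress-then-extract round trip from the last two sections might look like the sketch below. Passing zipfile.ZIP_DEFLATED is an assumption: without a compression argument, the file would only be stored in the archive, not compressed. The extracted/ target folder is also an assumption that keeps the output separate from the input:

```python
import os
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    # Placeholder document to compress.
    document = os.path.join(tmp, "document.txt")
    with open(document, "w") as handle:
        handle.write("An important document.\n")

    compressed = os.path.join(tmp, "compressed_file.zip")
    with zipfile.ZipFile(compressed, "w", zipfile.ZIP_DEFLATED) as myzip:
        myzip.write(document, arcname="document.txt")

    # Reverse the operation: extract everything into a separate folder.
    target = os.path.join(tmp, "extracted")
    with zipfile.ZipFile(compressed, "r") as myzip:
        myzip.extractall(target)

    with open(os.path.join(target, "document.txt")) as handle:
        restored = handle.read()

print(restored, end="")
```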

In this guide, we focused on creating and managing zip archives in Python. We explored the zipfile module, learned how to create a zip archive of a directory, and even dove into handling nested directories and large directories. We've also covered error handling within zipfile, common errors and their solutions, compressing individual files, and extracting zip files.

© 2013- 2024 Stack Abuse. All rights reserved.

Zip and unzip files with zipfile and shutil in Python

In Python, the zipfile module allows you to zip and unzip files, i.e., compress files into a ZIP file and extract a ZIP file.

- zipfile — Work with ZIP archives — Python 3.11.4 documentation

You can also easily zip a directory (folder) and unzip a ZIP file using the make_archive() and unpack_archive() functions from the shutil module.

- shutil - Archiving operations — High-level file operations — Python 3.11.4 documentation

- Zip a directory (folder): shutil.make_archive()

- Unzip a ZIP file: shutil.unpack_archive()

- Basics of the zipfile module: ZipFile objects

- Create a new ZipFile object

- Add files with the write() method

- Add existing files with the write() method

- Create and add a new file with the open() method

- Check the list of files in a ZIP file

- Extract individual files from a ZIP file

- Read files in a ZIP file

- Execute command with subprocess.run()

See the following article on the built-in zip() function.

- zip() in Python: Get elements from multiple lists

All sample code in this article assumes that the zipfile and shutil modules have been imported. They are included in the standard library, so no additional installation is required.

You can zip a directory (folder) into a ZIP file using shutil.make_archive() .

- shutil.make_archive() — High-level file operations — Python 3.11.4 documentation

Here are the first three arguments for shutil.make_archive() in order:

- base_name : Path for the ZIP file to be created (without extension)

- format : Archive format. Options are 'zip' , 'tar' , 'gztar' , 'bztar' , and 'xztar'

- root_dir : Path of the directory to compress

For example, suppose there is a directory dir_zip with the following structure in the current directory.

Compress this directory into a ZIP file archive_shutil.zip in the current directory.

In this case, the specified directory dir_zip itself is not included in archive_shutil.zip .

If you want to include the directory itself, specify the parent directory of the target for the third argument root_dir , and the relative path from root_dir to the target directory for the fourth argument base_dir .

- shutil - Archiving example with base_dir — High-level file operations — Python 3.11.4 documentation

See the next section for the result of unzipping.

You can unzip a ZIP file and extract all its contents using shutil.unpack_archive() .

- shutil.unpack_archive() — High-level file operations — Python 3.11.4 documentation

The first argument filename is the path of the ZIP file, and the second argument extract_dir is the path of the target directory where the archive is extracted.

If extract_dir is omitted, the archive is extracted to the current directory.

Here, extract the ZIP file compressed in the previous section.

It is extracted as follows:

While the documentation doesn't explicitly mention it, it appears that a new directory is created even if extract_dir doesn't exist (as confirmed in Python 3.11.4).

The ZIP file created by shutil.make_archive() with base_dir is extracted as follows:
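The directory listings and calls aren't reproduced here. As a sketch of both variants, the snippet below zips a placeholder dir_zip (the files inside it, and the names archive_shutil_base , dir_out , and dir_out_base , are assumptions) once without and once with base_dir , then unpacks both archives and compares the results:

```python
import pathlib
import shutil
import tempfile

def list_files(directory):
    """Return the relative paths of all files below directory."""
    return sorted(
        p.relative_to(directory).as_posix() for p in directory.rglob("*") if p.is_file()
    )

with tempfile.TemporaryDirectory() as tmp:
    root = pathlib.Path(tmp)
    (root / "dir_zip" / "dir_sub").mkdir(parents=True)
    (root / "dir_zip" / "file.txt").write_text("text\n")
    (root / "dir_zip" / "dir_sub" / "file_sub.txt").write_text("sub\n")

    # Without base_dir: dir_zip itself is not included in the archive.
    flat = shutil.make_archive(
        str(root / "archive_shutil"), format="zip", root_dir=str(root / "dir_zip")
    )
    # With base_dir: entries are stored under dir_zip/.
    nested = shutil.make_archive(
        str(root / "archive_shutil_base"), format="zip",
        root_dir=str(root), base_dir="dir_zip",
    )

    # extract_dir is created if it doesn't exist.
    shutil.unpack_archive(flat, str(root / "dir_out"))
    shutil.unpack_archive(nested, str(root / "dir_out_base"))

    flat_files = list_files(root / "dir_out")
    nested_files = list_files(root / "dir_out_base")

print(flat_files)
print(nested_files)
```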

The zipfile module provides the ZipFile class, which allows you to create, read, and write ZIP files.

- zipfile - ZipFile Objects — Work with ZIP archives — Python 3.11.4 documentation

The constructor zipfile.ZipFile(file, mode, ...) is used to create ZipFile objects. Here, file represents the path of a ZIP file, and mode can be 'r' for read, 'w' for write, or 'a' for append.

The ZipFile object needs to be closed with the close() method, but if you use the with statement, it is closed automatically when the block is finished.

The usage is similar to reading and writing files with the built-in open() function; you can specify the mode and use the with statement.

- Read, write, and create files in Python (with and open())

Specific examples are described in the following sections.

Compress individual files into a ZIP file

To compress individual files into a ZIP file, create a new ZipFile object and add the files you want to compress with the write() method.

With zipfile.ZipFile() , provide the path of the ZIP file you want to create as the first argument file and set the second argument mode to 'w' for writing.

In write mode, you can specify the compression method and level using the compression and compresslevel arguments. The available options for compression are as follows; BZIP2 and LZMA have a higher compression ratio, but it takes longer to compress.

- zipfile.ZIP_STORED : No compression (default)

- zipfile.ZIP_DEFLATED : Usual ZIP compression

- zipfile.ZIP_BZIP2 : BZIP2 compression

- zipfile.ZIP_LZMA : LZMA compression

For ZIP_DEFLATED , compresslevel corresponds to the level of zlib.compressobj() . The default is -1 ( Z_DEFAULT_COMPRESSION ).

level is the compression level – an integer from 0 to 9 or -1 . A value of 1 (Z_BEST_SPEED) is fastest and produces the least compression, while a value of 9 (Z_BEST_COMPRESSION) is slowest and produces the most. 0 (Z_NO_COMPRESSION) is no compression. The default value is -1 (Z_DEFAULT_COMPRESSION). Z_DEFAULT_COMPRESSION represents a default compromise between speed and compression (currently equivalent to level 6). zlib.compressobj() — Compression compatible with gzip — Python 3.11.4 documentation

- cpython/Lib/zipfile.py at v3.11.4 · python/cpython · GitHub

To add a file to the ZipFile object, use the write() method.

- zipfile.ZipFile.write() — Work with ZIP archives — Python 3.11.4 documentation

The first argument filename is the path to the file to be added. The second argument arcname specifies the name of the file in the ZIP archive; if arcname is omitted, the name of filename is used. In addition, arcname can be used to define a directory structure.

You can also select a compression method and level for each file by specifying compress_type and compresslevel in the write() method.
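As a minimal sketch of these options, the snippet below sets a default compression method for the archive, uses arcname to define a directory structure, and overrides the method for one file. All file names are placeholders:

```python
import os
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    # Placeholder input files.
    sources = {}
    for name in ["file1.txt", "file2.txt", "file3.txt"]:
        sources[name] = os.path.join(tmp, name)
        with open(sources[name], "w") as handle:
            handle.write("sample text\n" * 100)

    zip_path = os.path.join(tmp, "archive_zipfile.zip")
    with zipfile.ZipFile(
        zip_path, "w", compression=zipfile.ZIP_DEFLATED, compresslevel=9
    ) as zf:
        zf.write(sources["file1.txt"], arcname="file1.txt")
        # arcname can place the file in a directory inside the archive.
        zf.write(sources["file2.txt"], arcname="dir_sub/file2.txt")
        # Per-file override: store this member without compression.
        zf.write(sources["file3.txt"], arcname="file3.txt", compress_type=zipfile.ZIP_STORED)

    with zipfile.ZipFile(zip_path) as zf:
        methods = {info.filename: info.compress_type for info in zf.infolist()}

print(methods)
```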

Add other files to an existing ZIP file

To append additional files to an existing ZIP file, create a ZipFile object in append mode. Specify the path of the existing ZIP file for the first argument file and 'a' (append) for the second argument mode of zipfile.ZipFile() .

You can add existing files with the write() method of the ZipFile object.

The following is an example of adding another_file.txt in the current directory. The argument arcname is omitted.

You can also create a new file within the ZIP and add content to it. Create the ZipFile object in append mode ( 'a' ) and use its open() method.

- zipfile.ZipFile.open() — Work with ZIP archives — Python 3.11.4 documentation

Specify the path of the file you want to create within the ZIP as the first argument of the open() method, and set the second argument, mode , to 'w' .

You can write the contents with the write() method of the opened file object.

The argument for the write() method should be specified in bytes , not str . To write text, either use the b'...' notation or convert the string using its encode() method.

An example of reading a file from a ZIP using the open() method of the ZipFile object will be described later.
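Both ways of adding to an existing ZIP file can be sketched together as shown below. The archive name and the new member's path ( dir_sub/new_file.txt ) are assumptions:

```python
import os
import tempfile
import zipfile

with tempfile.TemporaryDirectory() as tmp:
    zip_path = os.path.join(tmp, "archive_zipfile.zip")
    # Start from an existing archive with one member.
    with zipfile.ZipFile(zip_path, "w") as zf:
        zf.writestr("file1.txt", "first file\n")

    another = os.path.join(tmp, "another_file.txt")
    with open(another, "w") as handle:
        handle.write("another file\n")

    with zipfile.ZipFile(zip_path, "a") as zf:
        # Add an existing file from disk.
        zf.write(another, arcname="another_file.txt")
        # Create a new member directly inside the archive; data must be bytes.
        with zf.open("dir_sub/new_file.txt", "w") as f:
            f.write(b"First line\n")
            f.write("Second line\n".encode("utf-8"))

    with zipfile.ZipFile(zip_path) as zf:
        appended_names = zf.namelist()
        new_content = zf.read("dir_sub/new_file.txt")

print(appended_names)
```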

To check the contents of a ZIP file, create a ZipFile object in read mode ( 'r' , default).

You can get a list of archived items with the namelist() method of the ZipFile object.

- zipfile.ZipFile.namelist() — Work with ZIP archives — Python 3.11.4 documentation

As seen from the above results, ZIP files created with shutil.make_archive() list directories individually. The same behavior is observed with ZIP files compressed using the standard function of the Finder on a Mac.

You can exclude directories with list comprehensions.

- List comprehensions in Python
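As a small sketch of listing members and filtering out directories, the archive below is built with an explicit directory entry (a name ending in a slash) to mimic the behavior described above. That construction is an assumption made for the demonstration.

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "archive.zip"
    with zipfile.ZipFile(archive, "w") as zf:
        zf.writestr("dir/", "")        # explicit directory entry
        zf.writestr("dir/a.txt", "a")
        zf.writestr("b.txt", "b")

    with zipfile.ZipFile(archive) as zf:
        all_names = zf.namelist()
        # Directory entries end with a slash, so they are easy to filter.
        files_only = [name for name in all_names if not name.endswith("/")]
```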

To unzip a ZIP file, create a ZipFile object in read mode ( 'r' , default).

If you want to extract only specific files, use the extract() method.

- zipfile.ZipFile.extract() — Work with ZIP archives — Python 3.11.4 documentation

The first argument member specifies the name of the file to be extracted (including its directory structure within the ZIP file), and the second argument path specifies the directory to extract the file.

If you want to extract all files, use the extractall() method. Specify the path of the directory to extract to as the first argument path .

- zipfile.ZipFile.extractall() — Work with ZIP archives — Python 3.11.4 documentation

In both cases, if path is omitted, files are extracted to the current directory. Although the documentation doesn't state it explicitly, the target directory appears to be created automatically if path does not exist (confirmed in Python 3.11.4).
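Both methods can be sketched together. The target directories one and all are hypothetical and do not exist before the calls.

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "archive.zip"
    with zipfile.ZipFile(archive, "w") as zf:
        zf.writestr("dir/a.txt", "a")
        zf.writestr("b.txt", "b")

    all_dir = Path(tmp) / "all"
    with zipfile.ZipFile(archive) as zf:
        # extract() returns the path of the file it created.
        one_path = zf.extract("dir/a.txt", path=Path(tmp) / "one")
        zf.extractall(path=all_dir)

    extracted_one = Path(one_path).read_text()
    extracted_all = sorted(
        p.relative_to(all_dir).as_posix() for p in all_dir.rglob("*") if p.is_file()
    )
```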

You can directly read files in a ZIP file.

Create a ZipFile object in read mode (default) and open the file inside with the open() method.

The first argument of open() is the name of a file in the ZIP (it may include the directory). The second argument mode can be omitted since the default value is 'r' (read).

You can read the contents using the read() method of the opened file object. The method returns a byte string ( bytes ), which can be converted to a string ( str ) using the decode() method.

Besides read() , you can also use readline() and readlines() with the file object, just like when using the built-in open() function.
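A sketch of reading a member without extracting it, using a hypothetical three-line text file:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "archive.zip"
    with zipfile.ZipFile(archive, "w") as zf:
        zf.writestr("dir/a.txt", "line 1\nline 2\nline 3\n")

    with zipfile.ZipFile(archive) as zf:
        # read() returns bytes; decode() turns them into str.
        with zf.open("dir/a.txt") as member:
            text = member.read().decode("utf-8")
        # readline() and readlines() also return bytes.
        with zf.open("dir/a.txt") as member:
            first_line = member.readline()
            remaining = member.readlines()
```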

ZIP with passwords (encryption and decryption)

The zipfile module supports decryption of password-protected (encrypted) ZIP files, but it does not support encryption.

It supports decryption of encrypted files in ZIP archives, but it currently cannot create an encrypted file. Decryption is extremely slow as it is implemented in native Python rather than C. zipfile — Work with ZIP archives — Python 3.11.4 documentation

Also, AES is not supported.

The zipfile module from the Python standard library supports only CRC32 encrypted zip files (see here: http://hg.python.org/cpython/file/71adf21421d9/Lib/zipfile.py#l420 ). zip - Python unzip AES-128 encrypted file - Stack Overflow

Both shutil.make_archive() and shutil.unpack_archive() do not support encryption or decryption.

The pyzipper library, as introduced on Stack Overflow above, supports AES encryption and decryption and can be used similarly to the zipfile module.

- danifus/pyzipper: Python zipfile extensions

To create a ZIP file with a password, specify encryption=pyzipper.WZ_AES with pyzipper.AESZipFile() and set the password with the setpassword() method. Note that you need to specify the password as a byte string ( bytes ).

The following is an example of unzipping a ZIP file with a password.

Of course, if the password is wrong, it cannot be decrypted.

The zipfile module also allows you to specify a password, but as mentioned above, it does not support AES.

If zipfile or pyzipper don't work for your needs, you can use subprocess.run() to handle the task using command-line tools.

- subprocess.run() — Subprocess management — Python 3.11.4 documentation

For example, use the 7z command of 7-zip (installation required).

This is equivalent to the following commands. x is the extract command (it keeps full paths). Note that -p<password> and -o<directory> take their values without a space.
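A hedged sketch of calling 7z through subprocess.run() could look like the following. The helper names, archive name, password, and output directory are all hypothetical, and the call only works if a 7z executable is installed and on the PATH.

```python
import shutil
import subprocess


def build_7z_extract_command(archive, password, out_dir):
    """Build the argument list for: 7z x -p<password> -o<directory> <archive>."""
    # No space between -p / -o and their values.
    return ["7z", "x", f"-p{password}", f"-o{out_dir}", str(archive)]


def extract_with_7z(archive, password, out_dir):
    """Run 7z to extract an encrypted archive (requires 7-Zip to be installed)."""
    if shutil.which("7z") is None:
        raise FileNotFoundError("7z executable not found on PATH")
    command = build_7z_extract_command(archive, password, out_dir)
    return subprocess.run(command, check=True)


command = build_7z_extract_command("encrypted.zip", "password", "extracted")
```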


Zip File Operations


Zip files are a popular way to compress and bundle multiple files into a single archive. They are commonly used for tasks such as file compression, data backup, and file distribution. Zipping or compressing files in Python is a useful way to save space on your hard drive and make it easier to transfer files over the internet.

The zipfile module in Python provides functionalities to create, read, write, append, and extract ZIP files.

Zip a Single File

You can use the zipfile module to create a zip file containing a single file. Here is how you can do it:

In the above code, we first imported the zipfile module. Then we defined the name of the zip file and the name of the source file. We created a ZipFile object and added the source file to it using the write() method. We then closed the zip file using the close() method.
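The steps described above can be sketched as follows. The file names are hypothetical, and arcname is passed only so that the temporary directory path is not stored in the archive.

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    # Names of the zip file and the source file.
    zip_name = Path(tmp) / "example.zip"
    source_file = Path(tmp) / "example.txt"
    source_file.write_text("some content")

    # Create the ZipFile object, add the file, then close explicitly.
    zf = zipfile.ZipFile(zip_name, "w")
    zf.write(source_file, arcname=source_file.name)
    zf.close()

    with zipfile.ZipFile(zip_name) as check:
        single_names = check.namelist()
```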

Zip Multiple Files

You can also create a zip file containing multiple files. Here is an example:

In the above example, we defined the names of multiple source files in a list. We then added each of these files to the zip file using a for loop and the write() method. Finally, we closed the zip file using the close() method.
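A sketch of the loop described above, with three hypothetical source files:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    zip_name = Path(tmp) / "example.zip"
    # List of source files to add (created here so the sketch runs).
    source_files = [Path(tmp) / name for name in ("file1.txt", "file2.txt", "file3.txt")]
    for path in source_files:
        path.write_text(f"content of {path.name}")

    zf = zipfile.ZipFile(zip_name, "w")
    for path in source_files:
        zf.write(path, arcname=path.name)
    zf.close()

    with zipfile.ZipFile(zip_name) as check:
        multi_names = check.namelist()
```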

By default, ZipFile stores files without compression (zipfile.ZIP_STORED). To actually reduce the size of the archive, set the compress_type argument to zipfile.ZIP_DEFLATED. This applies the DEFLATE compression method to the files being zipped.
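To illustrate the effect of compress_type, this sketch writes the same highly repetitive (hypothetical) file into two archives and compares their sizes on disk.

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    payload = Path(tmp) / "data.txt"
    payload.write_text("repetitive text\n" * 500)

    stored_zip = Path(tmp) / "stored.zip"
    deflated_zip = Path(tmp) / "deflated.zip"

    # No compress_type: the member is stored uncompressed.
    with zipfile.ZipFile(stored_zip, "w") as zf:
        zf.write(payload, arcname=payload.name)

    # ZIP_DEFLATED applies DEFLATE compression to this member.
    with zipfile.ZipFile(deflated_zip, "w") as zf:
        zf.write(payload, arcname=payload.name, compress_type=zipfile.ZIP_DEFLATED)

    stored_size = stored_zip.stat().st_size
    deflated_size = deflated_zip.stat().st_size
```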

It is straightforward to extract zip files in Python using the zipfile module. Here are two ways to do it:

In this example, we first import the zipfile module. We then create an instance of the ZipFile class for the zip file we want to extract. The r argument indicates that we want to read from the zip file, and myzipfile.zip is the name of the file we want to extract.

The extractall() method extracts all files from the zip file and saves them into the specified destination_folder . If destination_folder does not exist, it will be created.
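The first approach can be sketched like this. myzipfile.zip and destination_folder are the names used in the text; the archive content is made up so the sketch is runnable.

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    zip_path = Path(tmp) / "myzipfile.zip"
    with zipfile.ZipFile(zip_path, "w") as zf:
        zf.writestr("notes.txt", "notes")
        zf.writestr("docs/readme.txt", "readme")

    destination_folder = Path(tmp) / "destination_folder"
    existed_before = destination_folder.exists()

    with zipfile.ZipFile(zip_path, "r") as zf:
        zf.extractall(destination_folder)

    extracted_files = sorted(
        p.relative_to(destination_folder).as_posix()
        for p in destination_folder.rglob("*")
        if p.is_file()
    )
```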

In this example, we again import the zipfile module and create an instance of the ZipFile class. We then loop through all files in the zip file using namelist() . If a file has a .txt extension, we extract it to destination_folder .
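The second approach, extracting only .txt members, might be sketched as follows with a hypothetical mix of files:

```python
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    zip_path = Path(tmp) / "myzipfile.zip"
    with zipfile.ZipFile(zip_path, "w") as zf:
        zf.writestr("notes.txt", "notes")
        zf.writestr("image.png", b"\x89PNG")
        zf.writestr("docs/readme.txt", "readme")

    destination_folder = Path(tmp) / "destination_folder"
    with zipfile.ZipFile(zip_path, "r") as zf:
        for name in zf.namelist():
            # Extract only members with a .txt extension.
            if name.endswith(".txt"):
                zf.extract(name, destination_folder)

    txt_only = sorted(
        p.relative_to(destination_folder).as_posix()
        for p in destination_folder.rglob("*")
        if p.is_file()
    )
```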

By using these two code examples, you can easily extract files from zip files in Python. Remember to adjust the file paths and naming to fit your specific needs.


Python Zip | Zipping Files With Python


ZIP is one of the most widely used archive file formats. It uses lossless compression, so the original data can be reconstructed exactly from the compressed data. A zip file can contain a single file or a large directory tree with many files and sub-directories. Because it reduces file size, it is well suited to storing and transferring large files, and it is commonly used for email attachments. To make working with zip files easier, Python's standard library includes a module called zipfile. With the help of zipfile, we can open, extract, and modify zip files quickly and simply. Let's learn about Python Zip!

Viewing the Members of Python Zip File

All files and directories inside a zip file are called members. We can view all the members in a zip file by using the printdir() method. The method also prints the last modified time and the size of each member.

Example of using printdir() method in Python
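A possible version of this example is sketched below. The archive content is hypothetical, and the listing is also captured in a buffer through the file parameter so that it can be inspected.

```python
import io
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with zipfile.ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")
        zf.writestr("docs/b.txt", "world")

    buffer = io.StringIO()
    with zipfile.ZipFile(archive, "r") as zf:
        zf.printdir()             # prints name, modified time, and size
        zf.printdir(file=buffer)  # same table, written to a buffer
    listing = buffer.getvalue()
```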

In the above code example, since we used the with statement, the zip file is closed automatically when the block ends.

Zipping a File in Python

We can add a specific file to a zip file by using the write() method of a ZipFile object.

Example of zipping a file in Python
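One way this example might look, using a with statement and a hypothetical file name:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "report.txt"
    source.write_text("quarterly numbers")

    with ZipFile(Path(tmp) / "report.zip", "w") as zf:
        # arcname keeps the temporary directory path out of the archive.
        zf.write(source, arcname="report.txt")
        zipped_names = zf.namelist()
```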

Zipping All Files in Python Directory

To zip all files in a directory, we need to traverse every file in the directory and zip one by one individually using the zipfile module.

We can use the walk() function from the os module to traverse through the directory.

Example of zipping all files in a directory in Python
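A sketch of the os.walk() traversal, run on a hypothetical project directory with one sub-directory:

```python
import os
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "project"
    (root / "sub").mkdir(parents=True)
    (root / "top.txt").write_text("top")
    (root / "sub" / "nested.txt").write_text("nested")

    with ZipFile(Path(tmp) / "project.zip", "w") as zf:
        for folder, _subfolders, filenames in os.walk(root):
            for filename in filenames:
                full_path = os.path.join(folder, filename)
                # Store paths relative to the directory being zipped.
                zf.write(full_path, arcname=os.path.relpath(full_path, root))
        walked_names = sorted(zf.namelist())
```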

Although the above code does the job, there is a more concise way of zipping an entire directory: the make_archive() function from the shutil module, which we can use instead of the zipfile module.
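As a sketch of the shutil approach, assuming the function meant here is shutil.make_archive(), the whole (hypothetical) directory is zipped in one call:

```python
import shutil
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "project"
    (root / "sub").mkdir(parents=True)
    (root / "top.txt").write_text("top")
    (root / "sub" / "nested.txt").write_text("nested")

    # base_name has no extension; ".zip" is appended automatically.
    archive_path = shutil.make_archive(
        str(Path(tmp) / "project_backup"), "zip", root_dir=str(root)
    )

    with zipfile.ZipFile(archive_path) as zf:
        archived = set(zf.namelist())
```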

Extracting a File from Python Zip File

The ZipFile class in the zipfile module provides the extract() method to extract a specific file from the zip file.

Example of extracting a file from the zip file in Python
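A minimal sketch, with hypothetical member and directory names:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "first")
        zf.writestr("b.txt", "second")

    with ZipFile(archive, "r") as zf:
        # Extract only a.txt into the "output" directory.
        extracted_path = zf.extract("a.txt", path=Path(tmp) / "output")

    single_content = Path(extracted_path).read_text()
    b_was_extracted = (Path(tmp) / "output" / "b.txt").exists()
```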

Extracting All Files from the Zip Directory

Instead of extracting one file at a time, we can extract all files at once by using the method extractall() from the zipfile module.

Example of extracting all files from the zip directory in Python
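A minimal sketch, again with hypothetical names:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "first")
        zf.writestr("b.txt", "second")

    output = Path(tmp) / "output"
    with ZipFile(archive, "r") as zf:
        zf.extractall(output)  # extracts every member at once

    all_extracted = sorted(p.name for p in output.iterdir())
```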

Accessing the Info of a Zip File

We can use infolist() method from the zipfile module to get information on a zip file.

The method returns a list containing the ZipInfo objects of members in the zip file.

Example of using infolist() in Python
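A sketch that collects a few ZipInfo attributes for each member of a hypothetical archive:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")
        zf.writestr("docs/b.txt", "hello world")

    with ZipFile(archive, "r") as zf:
        # Each ZipInfo object describes one member.
        details = [(info.filename, info.file_size) for info in zf.infolist()]
        for info in zf.infolist():
            print(info.filename, info.file_size, info.compress_size, info.date_time)
```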

Adding a File to an Existing Zip File

Opening the zip file in append mode ‘a’ instead of write mode ‘w’ enables us to add files to the zip file without replacing the existing files.

Example of adding files to an existing zip file in Python
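A sketch of append mode on a hypothetical archive:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("old.txt", "already here")

    new_file = Path(tmp) / "new.txt"
    new_file.write_text("added later")

    # 'a' keeps old.txt; 'w' would have replaced the whole archive.
    with ZipFile(archive, "a") as zf:
        zf.write(new_file, arcname="new.txt")

    with ZipFile(archive, "r") as zf:
        appended_names = zf.namelist()
```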

Checking Whether a File is Zip File in Python

The function is_zipfile() is used to check whether a file is a zip file or not.

We need to pass the filename of the file that we want to check.

It returns True if the passed file is zip, otherwise, it returns False.

Example of using is_zipfile() in Python
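A sketch comparing a real zip file with a plain text file (both hypothetical):

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile, is_zipfile

with tempfile.TemporaryDirectory() as tmp:
    zip_path = Path(tmp) / "sample.zip"
    with ZipFile(zip_path, "w") as zf:
        zf.writestr("a.txt", "hello")

    text_path = Path(tmp) / "plain.txt"
    text_path.write_text("just text")

    zip_check = is_zipfile(zip_path)
    text_check = is_zipfile(text_path)
```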

Methods of Python ZipFile Object

namelist()

This method is used to view all files in a zip file. It returns a list containing the names of all files in a zip file, including the files present in the sub-directories.

Example of using namelist() in Python
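A minimal sketch with a hypothetical archive:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")
        zf.writestr("docs/b.txt", "world")

    with ZipFile(archive, "r") as zf:
        member_names = zf.namelist()
```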

setpassword(pwd)

This method sets the default password used to extract encrypted files from a zip file. We need to pass a byte string containing the password. It does not encrypt the archive, since the zipfile module cannot create encrypted files. It has no return value.

Example of using setpassword() in Python
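A sketch of the call itself. Since zipfile cannot create encrypted archives, the hypothetical archive here is unencrypted, so the password is set but never actually needed.

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "plain.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "not encrypted")

    with ZipFile(archive, "r") as zf:
        # The password must be bytes; it becomes the default for
        # reading encrypted members of this archive.
        zf.setpassword(b"secret")
        data_with_password_set = zf.read("a.txt")
```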

testzip()

This method checks the CRCs and file headers of all files in the archive, then returns the name of the first problematic file. It returns None if there isn't any.

Example of using testzip() in Python
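A sketch on a freshly written (and therefore intact) hypothetical archive:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")

    with ZipFile(archive, "r") as zf:
        first_bad_file = zf.testzip()  # None means no problems were found
```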

writestr()

This method is used to write a new file with new data into a zip file. We need to pass a string containing the file name and a string or byte string containing the data of the new file. A file with the passed name and data will be created in the zip file. It has no return value.

Example of using writestr() in Python
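A sketch writing one member from a str and one from bytes (hypothetical names):

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("from_str.txt", "text data")     # str is encoded as UTF-8
        zf.writestr("from_bytes.bin", b"\x00\x01")   # bytes are written as-is

    with ZipFile(archive, "r") as zf:
        str_member = zf.read("from_str.txt")
        bytes_member = zf.read("from_bytes.bin")
```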

Attributes of Python ZipFile Object

debug

This attribute holds an integer specifying the level of debug output to use. The value 0 (the default) means no output, while 3 means the most output.

Example of using debug in Python
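A sketch that reads the default level and then raises it, on a hypothetical archive:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")

    with ZipFile(archive, "r") as zf:
        default_level = zf.debug
        zf.debug = 3  # request the most verbose debug output
        raised_level = zf.debug
```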

comment

This attribute holds a byte string containing the comment supplied with the zip file.

Example of using comment in Python
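A sketch that sets a comment while writing and reads it back (the comment text is made up):

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "sample.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("a.txt", "hello")
        zf.comment = b"Monthly report archive"  # must be bytes

    with ZipFile(archive, "r") as zf:
        stored_comment = zf.comment
```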

Python Interview Questions on Python Zip Files

Q1. Write a program to print all the contents of the zip file ‘EmployeeReport.zip’.

Ans 1. Complete code is as follows:
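One possible answer is sketched below. The archive is created first with made-up content so that the sketch runs anywhere; the actual answer is the final with block.

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "EmployeeReport.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("employees.csv", "name,role\n")

    with ZipFile(archive, "r") as zf:
        zf.printdir()  # prints every member with its time and size
        q1_names = zf.namelist()
```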

Q2. Write a program to zip a directory ‘EmployeeReport’ without using any loops.

Ans 2. Complete code is as follows:
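One possible answer, assuming shutil.make_archive() is the intended loop-free tool. The directory content is made up for the demonstration.

```python
import shutil
import tempfile
import zipfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    report_dir = Path(tmp) / "EmployeeReport"
    report_dir.mkdir()
    (report_dir / "employees.csv").write_text("name,role\n")

    # Creates EmployeeReport.zip next to the directory, without any loop.
    q2_archive = shutil.make_archive(
        str(Path(tmp) / "EmployeeReport"), "zip", root_dir=str(report_dir)
    )

    with zipfile.ZipFile(q2_archive) as zf:
        q2_names = zf.namelist()
```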

Q3. Write a function to check whether a list of files is zip files or not. The function should return True if all files in the list are zip files, otherwise, it should return False.

Ans 3. Complete code is as follows:
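One possible answer; the function name all_zip_files is a hypothetical choice.

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile, is_zipfile


def all_zip_files(paths):
    """Return True only if every path in the list is a zip file."""
    return all(is_zipfile(path) for path in paths)


with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "good.zip"
    with ZipFile(good, "w") as zf:
        zf.writestr("a.txt", "hello")
    not_zip = Path(tmp) / "plain.txt"
    not_zip.write_text("just text")

    only_zips = all_zip_files([good])
    mixed = all_zip_files([good, not_zip])
```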

Q4. Write a program to extract the zip file ‘EmployeeReport.zip’.

Ans 4. Complete code is as follows:
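One possible answer. The archive and the target directory name are created here only so the sketch is runnable.

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "EmployeeReport.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("employees.csv", "name,role\n")

    target = Path(tmp) / "EmployeeReport"
    with ZipFile(archive, "r") as zf:
        zf.extractall(target)

    q4_files = sorted(p.name for p in target.iterdir())
```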

Q5. Write a program to add three files to an existing zip file ‘EmployeeReport.zip’. The three files are: ‘file1.txt’, ‘file2.txt’, and ‘file3.txt’.

Ans 5. Complete code is as follows:
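One possible answer, with the existing archive and the three files created first so the sketch runs anywhere:

```python
import tempfile
from pathlib import Path
from zipfile import ZipFile

with tempfile.TemporaryDirectory() as tmp:
    archive = Path(tmp) / "EmployeeReport.zip"
    with ZipFile(archive, "w") as zf:
        zf.writestr("employees.csv", "name,role\n")

    new_files = [Path(tmp) / name for name in ("file1.txt", "file2.txt", "file3.txt")]
    for path in new_files:
        path.write_text(path.name)

    # Append mode keeps employees.csv and adds the three files.
    with ZipFile(archive, "a") as zf:
        for path in new_files:
            zf.write(path, arcname=path.name)

    with ZipFile(archive, "r") as zf:
        q5_names = zf.namelist()
```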

Quiz on Zip Files in Python


1 . Question

The files and directories in a zip file are called ____.

- Zip directories

- Member files

2 . Question

What module is generally used for working with zip files in Python?

- Zipfile module

- Shutil module

3 . Question

What function is used to view all contents of a zip file?

4 . Question

What function is used to set a password to a zip file?

- setpassword()

- getpassword()

- Both A and B

5 . Question

_____ attribute is used to view the comment of a zip file.

- getcomment()

6 . Question

What function is used to extract a single file from a zip file?

- extractone()

- extractall()

- extractfile()

7 . Question

What function is used to extract all files from a zip file?

8 . Question

from zipfile import ZipFile

file = "students.zip"

with ZipFile(file, "r") as zip:
    print(zip.namelist())

- The code prints a list of only directories in the zip file

- The code prints a list of names of all files in a zip file